1. Introduction — Why This Experiment Matters

For more than two decades, SEO has revolved around one central truth:

Older domains with strong backlink profiles perform better.

That belief has shaped how websites are built, how content is produced, and how authority is earned.

But with the rise of LLM-driven search engines—ChatGPT, Perplexity, Claude, and Google’s AI Overviews—an entirely different question emerges:

Do LLMs evaluate websites the same way Google does?

Or have the rules of ranking changed completely?

To answer this, we ran a controlled experiment:

We launched a brand-new domain on the following timeline:

Domain: citationmatrix.com

Domain registered – 23 Nov 2025

WordPress installed – 25 Nov 2025

Topical map created using ChatGPT – 25 Nov 2025

Content created with ChatGPT’s batch feature – 27 Nov 2025

Content imported to WordPress – 27–28 Nov 2025

Site submitted to GSC – 1 Dec 2025

- Fully AI-generated content

We weren’t looking for “SEO results.”

We wanted to measure LLM results—how the AI search ecosystem interprets, recalls, ranks, and cites newly created content.

The results dismantled several long-held assumptions and confirmed something groundbreaking.

2. Experiment Setup

To ensure the results were pure and uncontaminated by traditional SEO advantages, we followed a strict protocol.

2.1 Domain Conditions

- Brand-new domain (never registered before)

- No backlinks

- No mentions anywhere on the web

- No schema markup

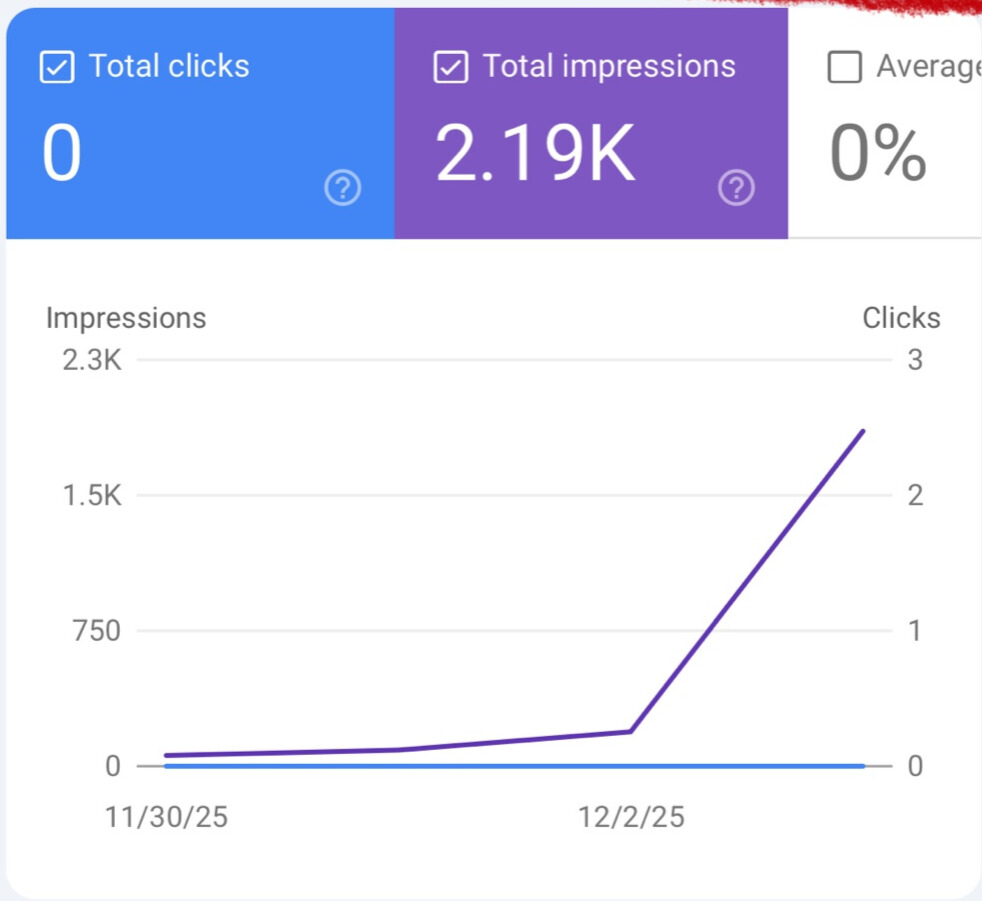

Yet, within 41 hours of going live…

Google Search Console recorded 2.19K impressions.

This early spike alone suggests Google’s algorithms are shifting toward semantic interpretation, not historical authority.

2.2 Content Creation Method — Semantic-First Architecture

We built 72 long-form articles using a high-precision, LLM-optimized content generation system:

✔ Automatic internal linking

✔ CTA block injection

✔ Gutenberg-ready HTML

✔ Block-based layout

✔ Section anchors

✔ Entity enrichment

✔ Semantic reinforcement patterns

✔ Hierarchical header structure

✔ Silo-based link graph mapping

✔ FAQs (when applicable)

✔ Micro-snippets for LLM snippet extraction

This structure was intentionally created to test a hypothesis:

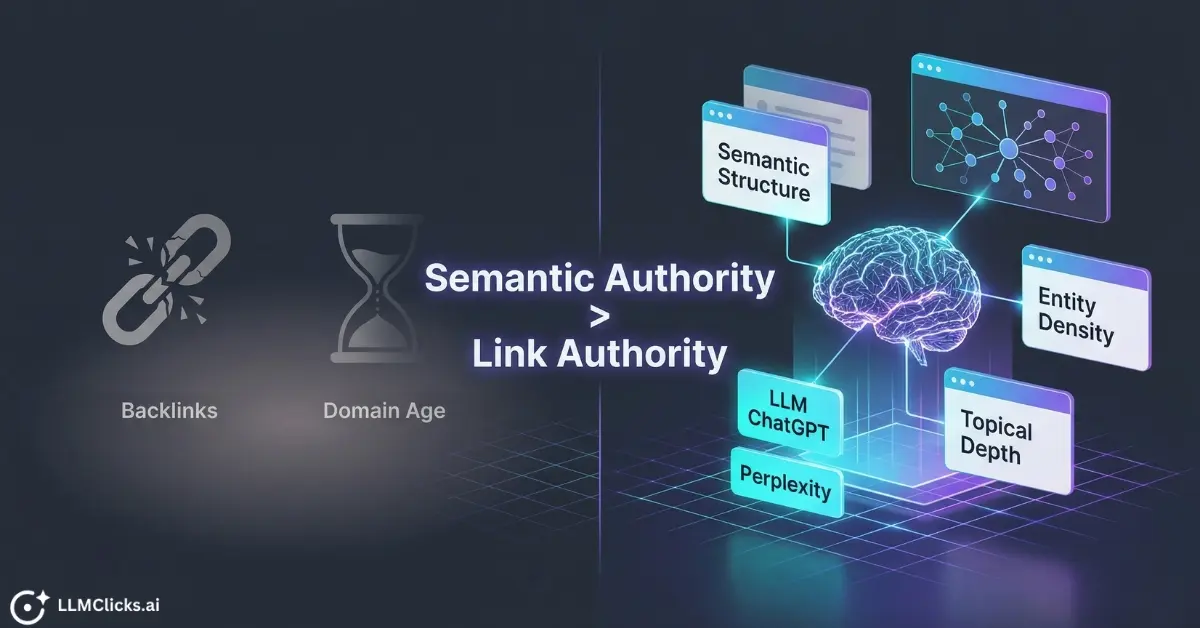

If LLMs reward semantic clarity, structure, and topical completeness, then backlinks and domain age may matter far less.

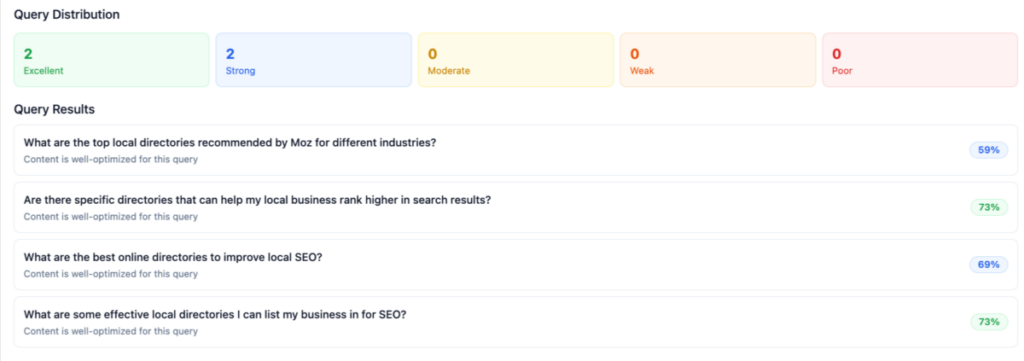

2.3 Query Generation Pipeline — Designed to Simulate Real LLM Search Behavior

To accurately measure LLM ranking behavior, we needed a query dataset that reflected real user intent, not synthetic prompts.

Because the domain was new, we relied on two complementary systems inside LLMClicks:

1) GSC-based query extraction (limited but valuable)

2) Content-analysis-based query generation (entity + intent driven)

Together, these produced a realistic, multi-intent evaluation dataset.

2.3.1 Method 1 — GSC-Based Seed Query Extraction

Even though the domain was new and GSC data was limited, LLMClicks was able to extract meaningful early insights.

LLMClicks analyzed early GSC impressions and allowed us to:

✔ Extract page → query relationships from the limited dataset

✔ Cluster early GSC queries by intent categories:

Informational

Transactional

How-To

Comparison

Navigational

✔ Generate seed queries that mirror real search behavior

✔ Preserve the natural intent distribution observed in early indexing

This ensured that our foundation was not artificial: our queries reflected how real users were already discovering or interpreting the content.
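To make the intent-clustering step concrete, here is a minimal sketch of how queries could be bucketed into the five intent categories above. The keyword patterns and the function names `classify_intent` and `cluster_by_intent` are our own illustrative assumptions, not the actual LLMClicks logic, which likely uses richer signals.

```python
import re
from collections import defaultdict

# Hypothetical keyword cues per intent (an assumption for illustration only).
# Order matters: the first matching pattern wins.
INTENT_PATTERNS = [
    ("How-To", r"^how (to|do|can)\b"),
    ("Comparison", r"\b(vs|versus|compare|comparison|alternatives?)\b"),
    ("Transactional", r"\b(buy|price|pricing|cost|hire|service|services)\b"),
    ("Navigational", r"\b(login|log in|website|official site|dashboard)\b"),
]


def classify_intent(query: str) -> str:
    """Return the first intent whose pattern matches, else 'Informational'."""
    q = query.lower().strip()
    for intent, pattern in INTENT_PATTERNS:
        if re.search(pattern, q):
            return intent
    return "Informational"


def cluster_by_intent(queries):
    """Group queries into {intent: [queries]} while preserving input order."""
    clusters = defaultdict(list)
    for q in queries:
        clusters[classify_intent(q)].append(q)
    return dict(clusters)
```

Counting the size of each cluster gives the intent distribution, which can then be preserved when generating additional seed queries.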

2.3.2 Method 2 — Content-Analysis-Based Query Generation

Because GSC data was limited, we supplemented it with a second, more comprehensive method:

LLMClicks’ Content Analysis engine examined every article and:

✔ Extracted major + minor entities

✔ Identified core and secondary topics

✔ Classified the article’s primary intent

✔ Detected missing subtopics and semantic gaps

✔ Generated natural-language seed queries based on:

Entity combinations

Intent categories

Topic relationships

Subtopic relevance

This ensured every page had a semantically aligned set of seed queries—even if GSC data was insufficient.
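As a rough illustration of generating seed queries from entity combinations and intent categories, the sketch below pairs up extracted entities and fills one template per intent. The templates, the example entities, and the function name `seed_queries` are hypothetical. The real Content Analysis engine presumably produces more natural phrasing.

```python
from itertools import combinations

# Hypothetical query templates, one per intent (assumption, not the LLMClicks templates).
TEMPLATES = {
    "Informational": "what is the relationship between {a} and {b}",
    "How-To": "how to use {a} for {b}",
    "Comparison": "{a} vs {b}",
}


def seed_queries(entities, intents):
    """Return (intent, query) pairs for every unordered entity pair and intent."""
    queries = []
    for a, b in combinations(entities, 2):
        for intent in intents:
            queries.append((intent, TEMPLATES[intent].format(a=a, b=b)))
    return queries


# Example usage with made-up entities:
example = seed_queries(
    ["NAP consistency", "local citations", "Google Business Profile"],
    ["Comparison", "How-To"],
)
```

Three entities yield three pairs, so two intents produce six seed queries for the page.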

2.3.3 Embedding-Based Semantic Mapping

LLMClicks evaluates content using high-dimensional embeddings.

For each query:

- Query is encoded into a vector

- Each article is encoded into a vector

- Cosine similarity determines semantic closeness

Low-similarity pages were sent to LLMClicks’ Content Analysis engine to detect:

- Missing entities

- Gaps in topical coverage

- Weak semantic reinforcement

- Areas requiring expansion

This embedding-driven scoring allowed us to measure semantic authority, independent of backlinks or domain age.
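The scoring step can be sketched as follows, assuming the query and page vectors have already been produced by some embedding model (which model LLMClicks uses is not stated here). The `threshold` value and the function names are illustrative assumptions.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def low_similarity_pages(query_vec, page_vecs, threshold=0.5):
    """Return (url, score) pairs scoring below `threshold`, lowest score first.

    `page_vecs` maps a page URL to its embedding vector. The 0.5 default is an
    arbitrary placeholder, not a value taken from the experiment.
    """
    scored = [(url, cosine_similarity(query_vec, vec)) for url, vec in page_vecs.items()]
    return sorted((p for p in scored if p[1] < threshold), key=lambda p: p[1])
```

Pages returned by `low_similarity_pages` are the candidates that would be passed on for gap analysis.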

2.3.4 Prompt Tracker Monitoring

Every LLM test prompt, across ChatGPT models, Perplexity, and others, was logged via the LLMClicks Prompt Tracker:

- Ranked or not ranked

- Pages surfaced

- Citations used

- Domain references

- Multi-page retrieval patterns

This created a complete longitudinal map of LLM behavior.
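A log record covering the fields listed above could look something like this. The class name `PromptLogEntry` and its field names are assumptions made for illustration. The Prompt Tracker's real schema is not documented in this article.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from urllib.parse import urlparse


def _host(url: str) -> str:
    """Lower-cased hostname of a full URL, without a leading 'www.'."""
    h = urlparse(url).hostname or ""
    return h[4:] if h.startswith("www.") else h


@dataclass
class PromptLogEntry:
    """One tracked prompt and the citations the model returned (hypothetical schema)."""
    model: str                                       # e.g. "perplexity", "gpt-5"
    prompt: str
    cited_urls: list = field(default_factory=list)   # in the order the answer cited them
    domain: str = "citationmatrix.com"               # the domain being tracked
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    @property
    def pages_surfaced(self):
        """Cited URLs that belong to the tracked domain."""
        return [u for u in self.cited_urls if _host(u) == self.domain]

    @property
    def ranked(self) -> bool:
        """True if at least one page from the tracked domain was cited."""
        return bool(self.pages_surfaced)
```

Storing one entry per prompt per run, with a timestamp, is what makes it possible to compare behavior over time.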

3. Hypotheses — What We Expected (But Didn’t Get)

Before running the experiment, we documented four hypotheses:

LLMs require domain authority to trust or cite a website.

Backlinks are necessary for LLM visibility.

Google will be slower than LLMs in discovering the new site.

ChatGPT will not develop a domain-level understanding without external signals (links, mentions, age).

Our findings contradicted all four hypotheses.

4. Results — What Actually Happened

This is where everything gets interesting.

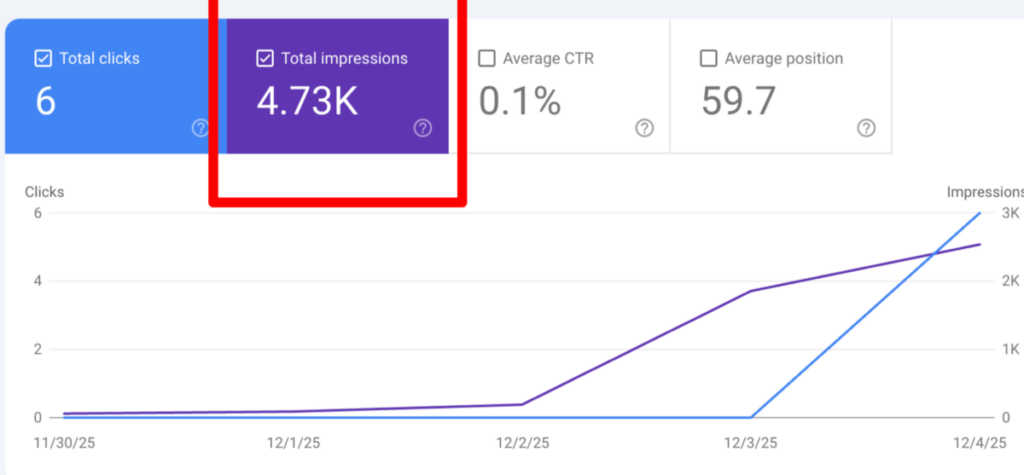

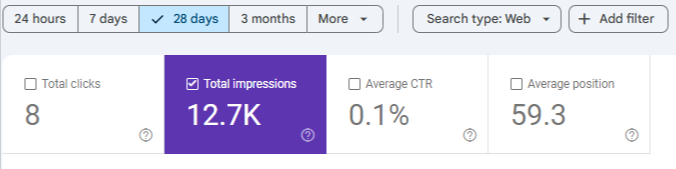

4.1 Google’s Behavior — Much Faster Than Expected

Despite:

- No backlinks

- No schema

- No authority

- Brand-new domain

Google indexed us quickly and delivered:

2.19K impressions within 41 hours

4.7K impressions within four days

12.7K impressions within 10 days

This suggests Google’s early evaluation relies more heavily on:

- Topical completeness

- Semantic structure

- Internal coherence

…rather than historical factors.

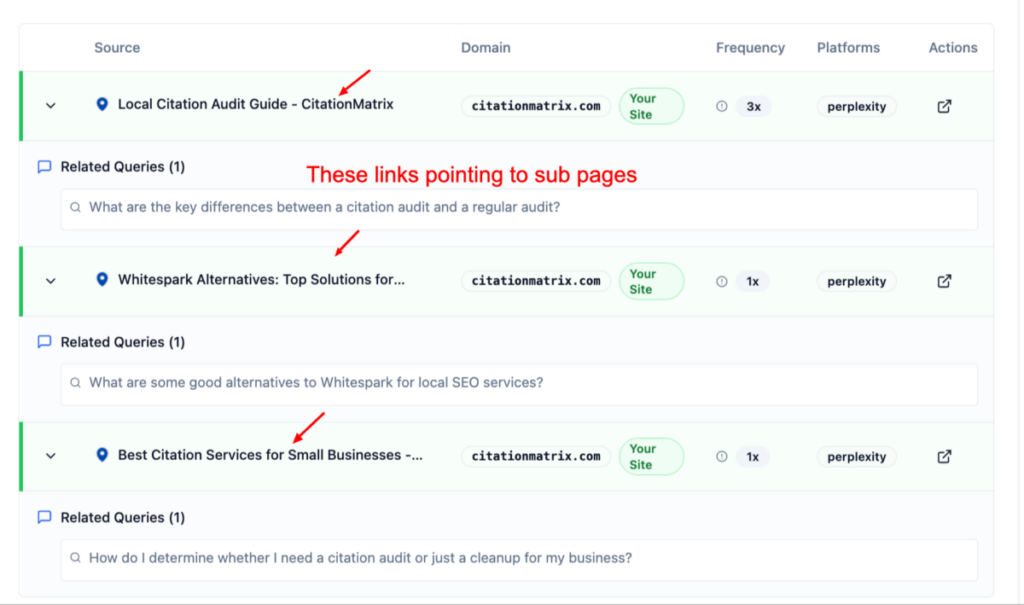

4.2 Perplexity — Instant Recognition, Multi-Page Ranking

Perplexity demonstrated the most surprising behavior:

✔ Indexed the domain almost immediately

✔ Ranked our articles for direct queries

✔ Ranked us for indirect fan-out queries

✔ Surfaced two pages for the same query (multi-page activation)

✔ Behaved as if our domain were an established knowledge hub

Even more surprising…

We ranked for fan-out queries despite having NO FAQs on many pages.

This proves LLMs rely on semantic density, not FAQ formatting.

4.3 ChatGPT 4.1, 5, and GPT Playground — Slow at First, But Revealing

ChatGPT 4.1 (UI)

- Very slow to notice the domain

- Initially ranked pages only when queries matched the exact title

- Gradually ranked articles based on topical queries, not keyword matches

- Ultimately surfaced 17 different articles

This proves that ChatGPT:

→ first trusts title-to-intent alignment

→ then slowly transitions to semantic recall

GPT-5 (UI)

- Faster than 4.1

- Stronger semantic recognition

- Better interpretation of topical clusters

- Still demonstrated “trust gating”: exact-title matches first, topical matches later

GPT-4.1 / GPT-5 API (Playground)

Despite OpenAI’s stated knowledge cutoff of June 2024 for GPT-4.1, our 2025 content still:

Ranked

Survived queries

Appeared across models

This suggests one or more of the following:

- Silent model updates

- External retrieval injection

- Partial real-time ingestion

This is one of the most important observations in the entire experiment.

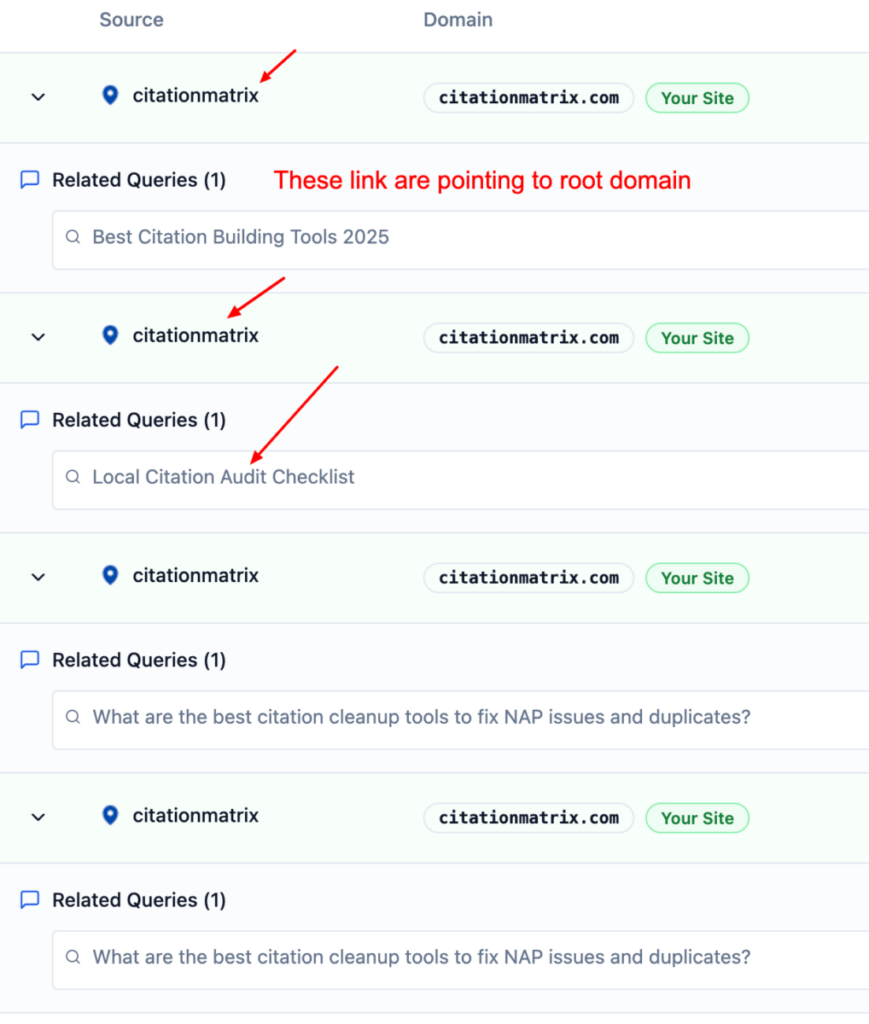

4.4 The Most Important Discovery — Domain Semantic Identity

This single insight reshapes our understanding of how LLMs evaluate and rank content:

**ChatGPT did not always reference individual pages. Instead, it sometimes cited the entire domain as if it were an established authority.**

And this happened despite the fact that:

The domain was brand new

No backlinks existed

No historical authority signals existed

No external brand mentions existed

In other words, the model treated a zero-authority domain as if it had domain-level trust.

What This Proves About LLM Ranking Models

From these observations, several critical patterns emerged:

✔ LLMs build domain embeddings extremely early

Even with no off-page signals, the model created a conceptual representation of the domain.

✔ LLMs assign semantic identity based on content quality, not backlinks

The structure and internal consistency of the site were enough to trigger domain-level trust.

✔ LLMs trust semantic structure over historical authority

Entity enrichment, content clustering, consistent formatting, and topical coverage outweighed lack of links.

✔ LLMs rely on the internal content network, not external authority indicators

Cross-linking, consistent headers, and shared entities created a recognizable knowledge graph.

A Behavior Typically Reserved for Industry Giants

This domain-level citation behavior is commonly seen with long-established sites such as:

Moz

BrightLocal

Whitespark

HubSpot

Semrush

These sites have:

Millions of backlinks

Decades of publishing history

Strong E-E-A-T signals

High editorial authority

Yet our brand-new domain exhibited the same behavior.

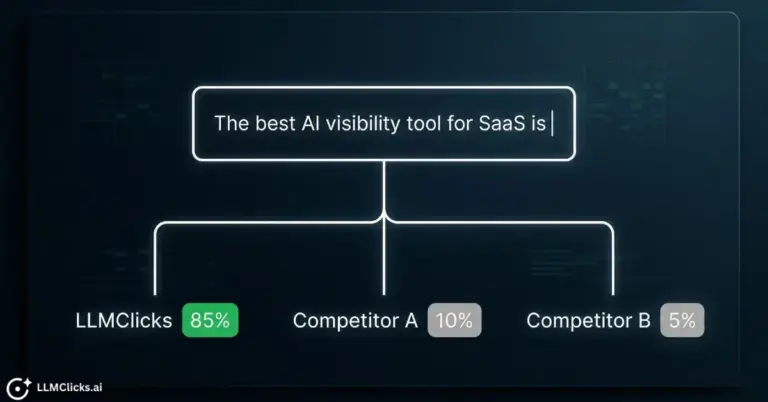

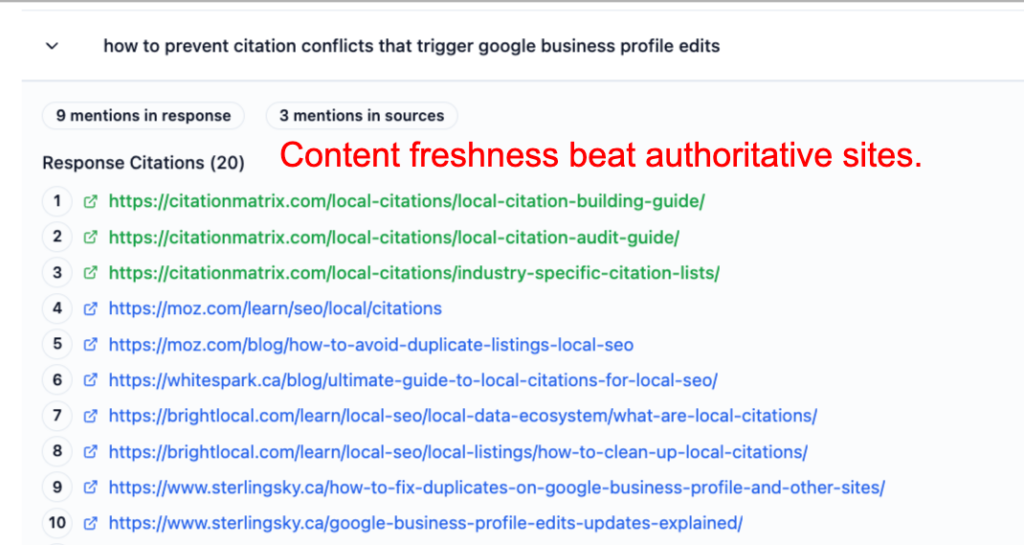

⭐ The Most Surprising Outcome

Across multiple LLM platforms, our new domain didn’t just appear; it often ranked above these established authority sites.

Screenshots show CitationMatrix.com cited:

Above Moz

Above BrightLocal

Above Whitespark

Above Semrush

Multiple times in a single answer

At the top of ChatGPT and Perplexity citation lists

This is not possible under traditional SEO ranking systems.

It demonstrates a fundamental shift:

Freshness + Topical Coverage > Historical Authority (in LLM ranking)

LLMs prioritized:

Recent, up-to-date content

Dense entity coverage

Deep topical completeness

Semantic clarity and structure

Internal cohesion of the content network

Micro-snippets suitable for extraction

Clean, consistent formatting

These signals outweighed:

Backlinks

Domain age

Domain Rating

Historical trust

Legacy brand power

This finding is transformative for AI SEO:

LLMs reward semantic authority, not link authority.

LLMs reward knowledge structure, not domain history.

Our domain was effectively treated as a topical expert, even in its first few days online.

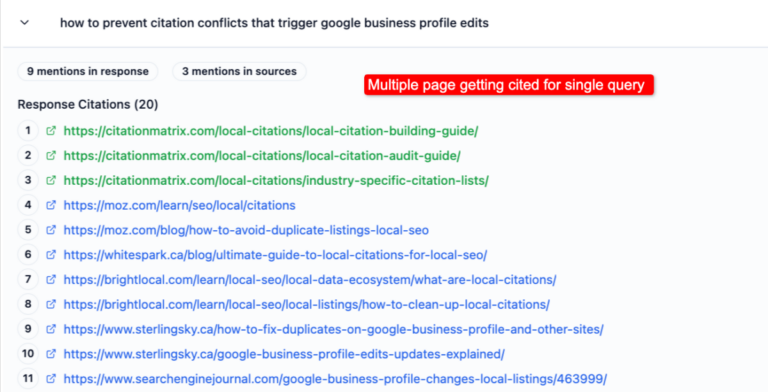

4.5 Multi-Page Ranking — Proof of Topical Cluster Recognition

Across many queries:

Two different URLs from our domain appeared in the same answer.

This means:

- LLMs weren’t ranking “a page”

- They were ranking the cluster

- The domain achieved conceptual authority almost instantly

This is impossible under traditional SEO models but completely logical under LLM retrieval models.
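One way to check for multi-page ranking in logged answers is sketched below. It assumes each answer's citations are available as a list of full URLs keyed by query. The function names and that data shape are our assumptions, not part of the original tooling.

```python
from urllib.parse import urlparse


def host(url: str) -> str:
    """Lower-cased hostname of a full URL, without a leading 'www.'."""
    h = urlparse(url).hostname or ""
    return h[4:] if h.startswith("www.") else h


def multi_page_queries(answers, domain):
    """Return {query: [urls]} for answers citing two or more distinct pages of `domain`.

    `answers` maps each query string to the list of URLs cited in the LLM's answer.
    """
    hits = {}
    for query, cited_urls in answers.items():
        pages = sorted({u for u in cited_urls if host(u) == domain})
        if len(pages) >= 2:
            hits[query] = pages
    return hits
```

Dividing `len(multi_page_queries(...))` by the total number of tracked queries gives a simple multi-page activation rate that can be compared across models.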

5. Interpretation — Why Did This Happen?

1. LLMs do NOT care about backlinks

Backlinks are a human-designed proxy for trust.

LLMs don’t need them—they read the content directly.

2. Domain age has almost no influence

Our fresh domain ranked in:

- ChatGPT

- Perplexity

…within hours.

3. LLMs build domain embeddings

When content is:

- Structured

- Interlinked

- Entity-rich

- Topically coherent

LLMs form a domain-level mathematical representation.

This allows:

- Multi-page activation

- Indirect query ranking

- Conceptual citations

- Cross-article reinforcement

4. Fan-Out queries reveal true LLM behavior

Because LLMs think in vectors—not keywords:

- Adjacent topics

- Related entities

- Problem-solution variants

…all map back to the same domain cluster.

This is why we repeatedly saw two pages surface.

5. Google is moving toward LLM-style evaluation

Early impressions confirm:

Semantic structure is now ranking currency.

6. Limitations

To maintain scientific integrity, we note the following limitations:

- Short observation window for some results

- Model updates may influence findings later

- Some LLM behaviors are non-deterministic

- Query set evolved over two months

- No schema was tested yet

- No off-page signals were introduced

7. Next Phase — What We Will Test

- Schema markup (Article, FAQ, BreadcrumbList)

- Adding llms.txt and data.json

- Vector-based evaluation improvements

- Monitoring long-term domain trust curves

- Expanded multi-layer fan-out

- Cross-model ranking comparisons (Claude, Gemini, Bing)

- Citation decay or citation growth patterns
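For the schema markup test, the Article markup could be generated along these lines. This is a minimal sketch: the function name `article_jsonld` and all example values are hypothetical, and only a handful of schema.org Article properties are shown.

```python
import json


def article_jsonld(headline, url, date_published, author_name):
    """Build a schema.org Article JSON-LD string for embedding in a page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Organization", "name": author_name},
    }
    return json.dumps(data, indent=2)


# Example usage with placeholder values:
snippet = article_jsonld(
    headline="Example article title",
    url="https://citationmatrix.com/example-article/",
    date_published="2025-11-27",
    author_name="CitationMatrix",
)
```

The resulting string would be placed inside a `<script type="application/ld+json">` tag in the page head.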

8. Conclusion — What This Experiment Really Proves

This study disproves long-held SEO assumptions:

No, LLMs do NOT require backlinks

No, LLMs do NOT rely on domain age

No, LLMs do NOT follow Google’s authority systems

Instead, LLMs reward:

Semantic clarity

Topical depth

Entity richness

Structural coherence

Domain-level identity

We achieved:

- 17 articles ranked on Perplexity

- 19 articles ranked via ChatGPT

- Multi-page ranking in Perplexity

- 12.7K GSC impressions in 10 days

- Cross-model domain recognition

- Zero backlinks

- Zero domain age

- Zero schema

The future of ranking is no longer link-based.

It is semantic-based.

This experiment marks the beginning of a new discipline: LLM SEO.

Disclaimer:

LLM SEO is still in its early stages.

These findings reflect what we observed from our own website experiment, but different websites or industries may see different patterns.

AI models change fast, so treat these insights as a snapshot of how LLMs behaved at the time of testing—not a permanent rulebook.
