By Shripad Deshmukh, Founder at LLMClicks.ai

Published on: 02-March-2026 | 2300 words | 11-minute read

Traditional SEO audits focus heavily on Googlebot. But what happens when GPTBot, ClaudeBot, and PerplexityBot try to parse your JavaScript-heavy SaaS website?

Most technical SEO professionals are flying blind right now. They are optimizing for traditional indexing while ignoring the fact that Large Language Models extract and synthesize data differently. If your site architecture is not machine-readable, your brand is unlikely to be cited.

This guide breaks down the exact technical infrastructure required to make your website AI-ready. We will cover advanced rendering logic, robots.txt configurations, schema mapping, and how to instantly spot code gaps using automated tools.

Before you audit your code, you must understand the crawler you are optimizing for. AI bots do not behave like traditional search engine spiders.

Googlebot crawls the web to build a massive index of links, ranking pages based on relevance, authority, and traditional user intent. Large Language Models operate on a fundamentally different architecture.

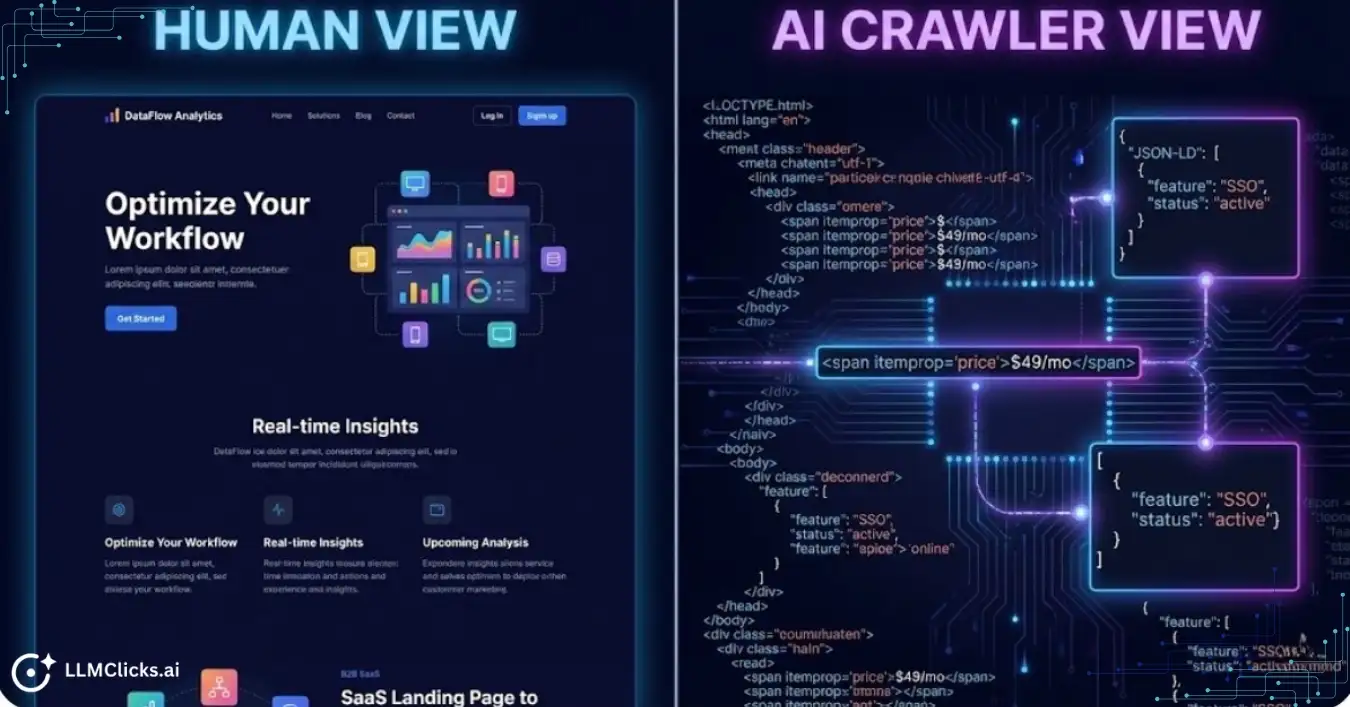

They do not return a ranked list of links; they synthesize answers. When an AI crawler visits your site, it looks for structured entities, factual claims, and semantic relationships to feed into the model's training data or a real-time Retrieval-Augmented Generation (RAG) system. If your data is buried under complex code, the AI cannot extract it.

We are moving past basic chatbots into the era of agentic AI search. These AI agents autonomously navigate websites to complete complex tasks and answer multi-layered user prompts.

If an AI agent cannot easily parse your pricing page or feature list, it will simply move to your competitor’s site. You are no longer just optimizing for human readability. You must optimize for machine extractability.

A beautiful, high-converting website means absolutely nothing if OAI-SearchBot cannot parse your Document Object Model (DOM).

The biggest bottleneck for AI visibility today is JavaScript rendering. Many SaaS sites rely heavily on Client-Side Rendering (CSR) to load dynamic content. Googlebot has become good at executing JavaScript, but many AI crawlers appear to read only the initial HTML response without running scripts, so relying solely on CSR is a major risk.

For AI visibility and user experience, prioritize server-side rendering (SSR), static rendering, or a hybrid approach that renders the HTML on the server and then hydrates it on the client. Some bots can eventually render JavaScript, but pre-rendered content puts your key facts in the very first response, so every AI crawler can extract them whether or not it executes scripts.

Do not force an LLM bot to waste its crawl budget rendering your JavaScript. Serve the fully rendered HTML upfront.
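A quick way to approximate what a non-rendering crawler sees is to inspect only the raw HTML your server returns, without executing any JavaScript. The Python sketch below (standard library only) extracts the text from a raw HTML string, skipping `<script>` and `<style>` contents, and reports which key phrases are missing. The function name, the sample pages, and the phrases are hypothetical; fetching the HTML (for example with `curl`) is left out so the check runs offline, and passing it is no guarantee that any particular bot will extract the content.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text from raw HTML, ignoring <script> and <style> contents."""

    SKIP_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that a crawler reading raw HTML would see as content.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the server-sent HTML text."""
    extractor = VisibleTextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    text = " ".join(extractor.chunks).lower()
    return [p for p in key_phrases if p.lower() not in text]


# A client-side-rendered shell: the pricing only exists after JavaScript runs.
csr_shell = '<html><body><div id="root"></div><script>render("Pro plan $49/month")</script></body></html>'
# A server-rendered page: the same facts are already in the initial HTML.
ssr_page = "<html><body><h1>Pricing</h1><p>Pro plan $49/month</p></body></html>"

print(missing_from_raw_html(csr_shell, ["Pro plan", "$49/month"]))  # ['Pro plan', '$49/month']
print(missing_from_raw_html(ssr_page, ["Pro plan", "$49/month"]))   # []
```

If a phrase your buyers care about shows up as missing, that content is being produced in the browser and is a candidate for SSR or static rendering.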

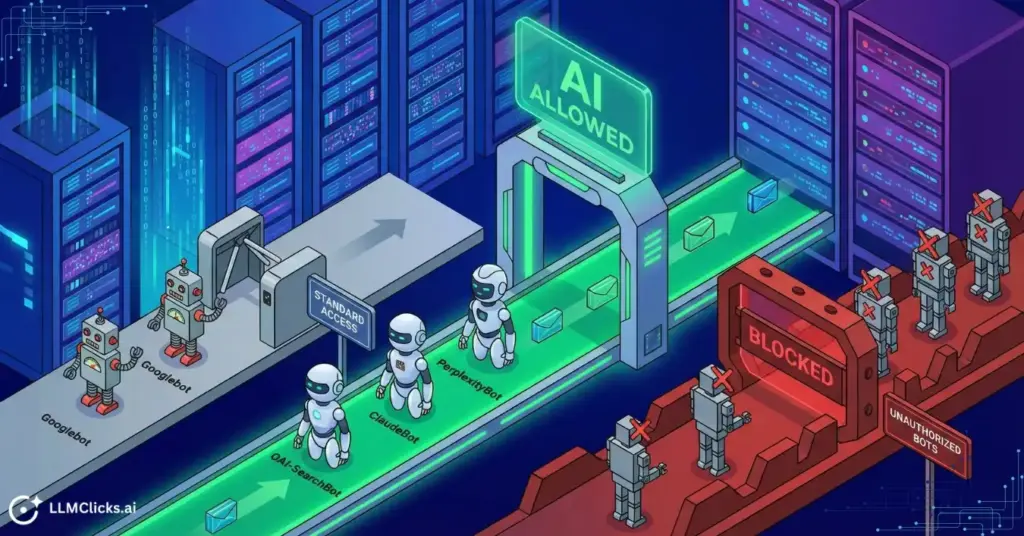

Your content cannot be synthesized if it cannot be crawled. Technical accessibility is the absolute foundation of Generative Engine Optimization. You must ensure your server architecture welcomes AI crawlers rather than blocking them entirely.

Many SaaS companies accidentally block AI crawlers. During the early days of ChatGPT, security teams panicked and added blanket disallow rules for all AI bots. Today, that is a massive competitive disadvantage.

You must explicitly allow the crawlers that power major answer engines. Check your robots.txt file immediately. Ensure you are not blocking the user agents discussed throughout this guide: GPTBot, OAI-SearchBot, ClaudeBot, PerplexityBot, and Google-Extended.

The rule is simple: if these bots cannot access your pricing or feature pages, they will synthesize answers using your competitors’ data.
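You can test your robots.txt rules before a crawler ever hits them. The sketch below uses Python's standard `urllib.robotparser` to report which AI user agents a given robots.txt blocks from a page. The agent list contains the tokens named in this guide, and `/pricing` is an illustrative path; adjust both for your own site. Note that Python's parser applies the first matching rule in a group, which can differ from Google's longest-match behavior, so treat this as a quick smoke test rather than an exact emulation of every bot.

```python
from urllib.robotparser import RobotFileParser

# AI crawler tokens named in this guide; extend the list for your own audit.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_agents(robots_txt: str, path: str = "/pricing") -> list[str]:
    """Return the AI user agents that this robots.txt blocks from `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, path)]


# A leftover rule from an early "block the AI bots" policy.
legacy_rules = """
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_agents(legacy_rules))  # ['GPTBot']
```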

A new convention is emerging specifically for AI crawlers: the llms.txt file. It is a community proposal rather than an official standard.

While robots.txt tells bots where they can go, llms.txt tells them what to read first. Placed at the root of your site (/llms.txt), this markdown file acts as a stripped-down, machine-readable directory. It points LLMs directly to your most important documentation, API references, and product specs without forcing them to render complex HTML or CSS.

If you run a technical SaaS product, deploying an llms.txt file can reduce the crawl budget AI models spend reaching your core features, although not every AI provider has confirmed that its crawlers read the file.
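The llms.txt proposal describes a simple markdown layout: an H1 with the site or project name, a blockquote summary, then H2 sections containing bulleted markdown links with optional notes. The Python sketch below renders that layout from plain data. The product name, URLs, and section names are hypothetical placeholders, and the generator is one possible approach, not an official tool.

```python
def build_llms_txt(name: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt body: H1 name, blockquote summary, H2 sections of links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            # Each entry is a markdown link, optionally followed by ": note".
            lines.append(f"- [{title}]({url}): {note}" if note else f"- [{title}]({url})")
        lines.append("")
    return "\n".join(lines)


llms_txt = build_llms_txt(
    "Example SaaS",
    "Example SaaS is a hypothetical analytics product used to illustrate the format.",
    {
        "Docs": [
            ("Quickstart", "https://example.com/docs/quickstart.md", "Set up your first project"),
            ("API reference", "https://example.com/docs/api.md", ""),
        ],
        "Product": [
            ("Pricing", "https://example.com/pricing.md", "Plans and limits"),
        ],
    },
)
print(llms_txt)
```

Serve the result as plain text at the root of your domain and regenerate it whenever your docs or pricing pages move.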

AI search engines prioritize fast, accessible websites. LLM bots most likely allocate limited computational resources to each domain, so if your site takes ten seconds to load due to bloated JavaScript or unoptimized images, a bot may abandon the crawl.

Page speed likely affects how much of your site AI crawlers ingest. Optimize your Core Web Vitals, especially Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS), for human visitors, and keep server response times low for bots that fetch raw HTML. A fast server response makes it far more likely that AI models ingest your structured data before their requests time out.

Large Language Models do not read paragraphs like humans do. They parse deterministic data. If you want an AI to quote your exact pricing or feature list, you must feed it structured data.

When an AI model synthesizes a comparison between two tools, it looks for certainty. Unstructured paragraph text forces the model to infer the context. Schema markup removes most of that guesswork.

By wrapping your data in JSON-LD structured markup, you explicitly define the entities on your page. You make your website machine-readable. This is the single highest-ROI technical fix you can implement for AI visibility.

For B2B SaaS companies, SoftwareApplication schema is essential. It states your exact product details, category, and requirements in a form machines can parse without ambiguity.

Here is an example of the JSON-LD structure to implement on your homepage or core product page:

```json
{
  "@context": "https://schema.org",
  "@type": "SoftwareApplication",
  "@id": "https://llmclicks.ai/#software",
  "name": "LLMClicks.ai",
  "alternateName": "LLM Clicks",
  "url": "https://llmclicks.ai/",
  "description": "LLMClicks.ai helps your brand appear in ChatGPT, Google AI, Bing, and Perplexity answers. Track mentions, analyze citations, and optimize your site with a 120-point audit, built for agencies and in-house teams.",
  "applicationCategory": "BusinessApplication",
  "applicationSubCategory": "AI Visibility & SEO Analytics",
  "operatingSystem": "Web-based",
  "browserRequirements": "Requires JavaScript. Compatible with modern web browsers.",
  "softwareVersion": "1.0"
}
```

This snippet reduces the risk of an AI hallucinating your category or core value proposition. It states explicitly what your software does and who it is for.
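JSON-LD only takes effect once it is embedded in the page, typically inside a `<script type="application/ld+json">` element in the server-rendered HTML. The Python sketch below serializes a schema dictionary and wraps it in that element, escaping `<` as `\u003c` so a string such as `</script>` inside your data cannot close the tag early. The helper name is hypothetical; most frameworks have their own way of injecting this tag.

```python
import json


def jsonld_script_tag(data: dict) -> str:
    """Serialize a schema.org dict into a <script type="application/ld+json"> element."""
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # "<" can only occur inside JSON strings; "\u003c" decodes back to the same character.
    payload = payload.replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


software = {
    "@context": "https://schema.org",
    "@type": "SoftwareApplication",
    "@id": "https://llmclicks.ai/#software",
    "name": "LLMClicks.ai",
    "applicationCategory": "BusinessApplication",
    "operatingSystem": "Web-based",
}
tag = jsonld_script_tag(software)
print(tag)
```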

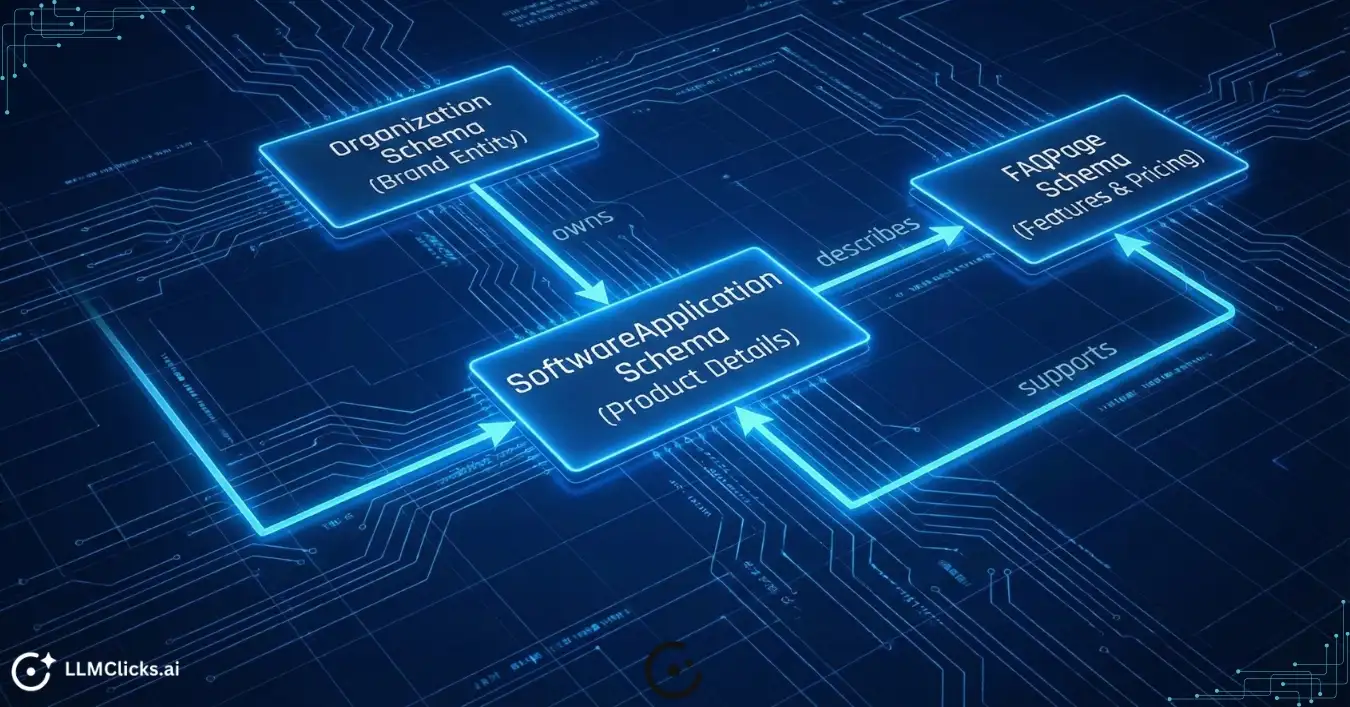

Do not just drop isolated schema tags on random pages. You must build a technical knowledge graph.

Use the @id property to link your entities together. Your SoftwareApplication schema should reference your Organization schema. Your FAQPage schema on your pricing page should explicitly reference the software product it describes.

When you nest and link these entities, you show the AI how your brand, your product, and your features are interconnected. These explicit semantic relationships make high-confidence citations in platforms like Perplexity and Gemini more likely.
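One way to express this linking is a single JSON-LD `@graph` in which nodes point to each other by `@id`. In the Python sketch below, the SoftwareApplication names the Organization as its `publisher`, and the pricing page's FAQPage declares the software as what it is `about`. The `example.com` IDs are hypothetical, the FAQPage is trimmed (a real one also needs its questions under `mainEntity`), and `find_dangling_ids` is an illustrative lint that catches references pointing at nodes you never defined; it is not a full schema.org validator.

```python
import json

graph = [
    {"@type": "Organization", "@id": "https://example.com/#organization", "name": "Example SaaS Inc."},
    {
        "@type": "SoftwareApplication",
        "@id": "https://example.com/#software",
        "name": "Example SaaS",
        "publisher": {"@id": "https://example.com/#organization"},
    },
    {
        "@type": "FAQPage",
        "@id": "https://example.com/pricing/#faq",
        "about": {"@id": "https://example.com/#software"},
    },
]
document = {"@context": "https://schema.org", "@graph": graph}


def find_dangling_ids(nodes: list[dict]) -> set[str]:
    """Return @id references that point to no node defined in the graph."""
    defined = {node["@id"] for node in nodes if "@id" in node}
    referenced: set[str] = set()

    def collect(value):
        # A reference is an object whose only key is "@id"; recurse into everything else.
        if isinstance(value, dict):
            if set(value) == {"@id"}:
                referenced.add(value["@id"])
            else:
                for v in value.values():
                    collect(v)
        elif isinstance(value, list):
            for v in value:
                collect(v)

    for node in nodes:
        for key, value in node.items():
            if key != "@id":
                collect(value)
    return referenced - defined


print(json.dumps(document, indent=2))
print(find_dangling_ids(graph))  # set()
```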

Great technical accessibility gets the AI crawler onto your page. Great content architecture gets your data extracted. You must format your Document Object Model (DOM) so the AI does not have to work hard to understand your value proposition.

AI models extract data from specific HTML tags. They rely heavily on your semantic HTML structure to determine the hierarchy of information.

If you wrap your section headers in bold paragraph tags instead of proper H2 and H3 tags, the AI crawler loses the context. Nest your headers hierarchically: every H3 must logically support the H2 above it, and no level should be skipped (for example, an H2 followed directly by an H4). Clean, semantic HTML acts as a roadmap for the AI to navigate your complex product features.
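A heading outline is easy to lint automatically. The Python sketch below collects the `<h1>` to `<h6>` levels from a page's HTML in document order and flags every place where the outline jumps down more than one level. The function names and sample markup are hypothetical. Note that a bold paragraph used as a fake header never appears in the outline at all, which is exactly the problem described above.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level of every <h1>-<h6> tag in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_skips(raw_html: str) -> list[tuple[int, int]]:
    """Return (previous, current) level pairs where the outline skips a level."""
    collector = HeadingCollector()
    collector.feed(raw_html)
    collector.close()
    levels = collector.levels
    return [(a, b) for a, b in zip(levels, levels[1:]) if b > a + 1]


page = "<h1>Product</h1><h2>Features</h2><h4>SSO</h4><p><b>Pricing</b></p>"
print(heading_skips(page))  # [(2, 4)]
```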

When optimizing for Generative Engine Optimization, you must flip your content structure upside down. Traditional SEO hides the answer at the bottom of the page to increase dwell time. AI SEO requires the inverted pyramid structure.

Put the most important, constraint-based answer at the very top of the page. Add a TL;DR summary formatted as a bulleted list. AI models tend to extract cleanly from `<ul>` and `<ol>` elements because each list item is a concise, factual statement.

If you are building a competitor comparison page, do not write five paragraphs explaining why your software is better.

Tabular data is easier for LLMs to extract reliably than the same facts spread across paragraphs. Build a clean, accessible HTML `<table>` that directly compares your pricing, SLA terms, and integrations against your competitors. When a user asks Perplexity to compare two tools, the AI can then pull your table data directly into its synthesized answer.
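"Clean and accessible" here means a real `<table>` with a `<caption>`, header cells marked with `scope`, and one fact per cell. The Python sketch below generates such a table from plain rows, HTML-escaping every value. The product names and figures are hypothetical placeholders, not real pricing or SLA data.

```python
from html import escape


def comparison_table(caption: str, columns: list[str], rows: list[list[str]]) -> str:
    """Build a plain HTML <table> with a caption, column headers, and row headers."""
    head = "".join(f'<th scope="col">{escape(c)}</th>' for c in columns)
    body = []
    for row in rows:
        # The first cell names the compared attribute, so mark it as a row header.
        first, *rest = row
        cells = "".join(f"<td>{escape(v)}</td>" for v in rest)
        body.append(f'<tr><th scope="row">{escape(first)}</th>{cells}</tr>')
    return (
        f"<table>\n<caption>{escape(caption)}</caption>\n"
        f"<thead><tr>{head}</tr></thead>\n"
        "<tbody>\n" + "\n".join(body) + "\n</tbody>\n</table>"
    )


html_table = comparison_table(
    "Example SaaS vs. Competitor X (hypothetical values)",
    ["Feature", "Example SaaS", "Competitor X"],
    [
        ["Starting price", "$49/month", "$59/month"],
        ["Uptime SLA", "99.9%", "99.5%"],
        ["Integrations", "Slack & HubSpot", "Slack"],
    ],
)
print(html_table)
```

Render the table on the server so it is present in the initial HTML, for the reasons covered in the rendering section.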

AI models do not just synthesize data. They weigh it against trust signals. If your website makes a technical claim, the AI looks for consensus across the web before citing you as the source.

You must connect your isolated web properties into a cohesive technical knowledge graph. Use the sameAs property in your Organization schema to link your website to your Wikipedia page (if one exists), your G2 profile, and your high-authority social channels.

When you explicitly map these relationships in your code, you prove to the AI that your brand is a recognized, authoritative entity in the SaaS space.
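As a sketch of what that looks like, the Python helper below builds an Organization node with a `sameAs` array and refuses entries that are not absolute `https` URLs, a common mistake when profile links are pasted without a scheme. The helper name, the strict https check, and all profile URLs are assumptions for illustration; replace them with your brand's real profiles.

```python
import json
from urllib.parse import urlparse


def organization_schema(name: str, url: str, same_as: list[str]) -> dict:
    """Build a schema.org Organization node that links to the brand's other profiles."""
    for link in same_as:
        parsed = urlparse(link)
        # This sketch insists on absolute https URLs; a bare "g2.com/..." is rejected.
        if parsed.scheme != "https" or not parsed.netloc:
            raise ValueError(f"sameAs entry is not an absolute https URL: {link!r}")
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": url.rstrip("/") + "/#organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }


# Hypothetical profile URLs for illustration only.
org = organization_schema(
    "Example SaaS",
    "https://example.com/",
    [
        "https://en.wikipedia.org/wiki/Example_SaaS",
        "https://www.g2.com/products/example-saas",
        "https://www.linkedin.com/company/example-saas",
    ],
)
print(json.dumps(org, indent=2))
```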

Inconsistent data is the fastest way to lose an AI citation. If your website lists your software pricing at $49 per month but your G2 profile says $39, the AI model receives conflicting signals. It may quote the wrong figure, blend the two, or skip your tool entirely to avoid providing inaccurate information.

Audit your Name, Address, and Product details across the entire web. Ensure your core entities are perfectly aligned across every directory, review site, and partner integration page.
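Once you have collected the facts each source publishes about you, the comparison itself is mechanical. The Python sketch below takes a mapping of source to facts and returns every field whose value differs between sources, after collapsing whitespace and case. The source names, field names, and values are hypothetical, and gathering the data from each directory is out of scope here.

```python
def find_inconsistencies(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return each field whose value differs across sources, mapped to {source: value}."""
    fields = {field for facts in profiles.values() for field in facts}
    conflicts = {}
    for field in sorted(fields):
        values = {source: facts[field] for source, facts in profiles.items() if field in facts}
        # Collapse whitespace and case so cosmetic differences are not reported.
        if len({" ".join(v.lower().split()) for v in values.values()}) > 1:
            conflicts[field] = values
    return conflicts


profiles = {
    "website": {"name": "Example SaaS", "starting_price": "$49/month"},
    "g2": {"name": "Example SaaS", "starting_price": "$39/month"},
    "partner_page": {"name": "Example  SaaS", "starting_price": "$49/month"},
}
print(find_inconsistencies(profiles))
# {'starting_price': {'website': '$49/month', 'g2': '$39/month', 'partner_page': '$49/month'}}
```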

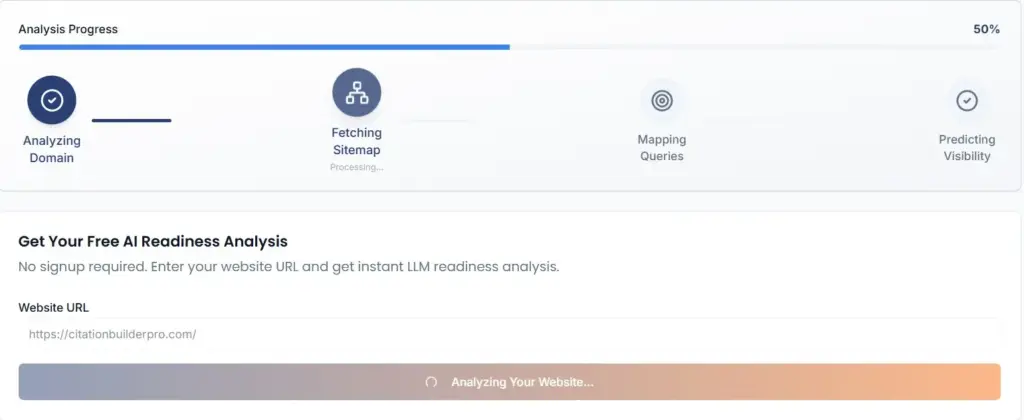

You now understand the theory behind AI readiness. But auditing a 500-page SaaS website manually is impractical.

Code changes daily. Marketing teams update pricing tables. Developers push new JavaScript frameworks. You cannot rely on manual checklists to verify if OAI-SearchBot can still parse your product schema after a site redesign.

You need enterprise-grade automation to spot code gaps instantly.

We built a dedicated tool to execute this exact technical workflow. You can run a comprehensive technical scan right now using our free LLMClicks AI Readiness Analyzer.

While this guide teaches you the architecture, the Analyzer actually executes the technical crawl. You simply enter your URL. The tool crawls your code like an LLM, spots specific AI-readiness gaps, validates your JSON-LD schema markup, and checks your rendering logic. It provides an instant diagnosis so your engineering team knows exactly what to fix today.

Once your technical foundation is secure, you must build the infrastructure to track your success.

You need to know whether your technical fixes are actually driving pipeline. Configure Google Analytics 4 to track referral traffic from the major answer engines. Set up a custom channel group for sessions whose source is "chatgpt.com", "perplexity.ai", or "claude.ai". This allows you to attribute conversions and revenue to your Generative Engine Optimization efforts.
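GA4 channel group conditions can match the session source against a regular expression, so it is worth testing the pattern before you paste it in. The Python sketch below checks a pattern covering only the three sources named above; it is anchored at both ends so that it behaves the same whether the tool applies a partial or a full match, and it also accepts subdomains such as `www.perplexity.ai`. The pattern and function name are assumptions for illustration, and you may need to add other answer engines as they appear in your referral reports.

```python
import re

# Anchored on both ends so partial- and full-match semantics give the same result.
AI_REFERRER_PATTERN = r"^(.*\.)?(chatgpt\.com|perplexity\.ai|claude\.ai)$"
AI_REFERRER = re.compile(AI_REFERRER_PATTERN)


def is_ai_referral(session_source: str) -> bool:
    """True if a session source hostname belongs to one of the listed answer engines."""
    return AI_REFERRER.search(session_source.strip().lower()) is not None


for source in ["chatgpt.com", "www.perplexity.ai", "claude.ai", "google", "notchatgpt.com"]:
    print(source, is_ai_referral(source))
```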

AI answers change constantly as models are retrained and as their live search results shift. A prompt that cited your brand yesterday might cite a competitor tomorrow. Use a platform like LLMClicks.ai to track your Share of Voice continuously, and set up hallucination alerts so your team is notified the moment an AI platform drops your citation or misquotes your pricing.
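To make the Share of Voice metric concrete, here is one common way to compute it, offered as an assumption rather than as the method any particular platform uses: across a set of tracked prompts, count how many answers cite each brand, then divide your brand's count by the total. The Python sketch below counts a brand at most once per answer; the brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(answers: list[list[str]], brand: str) -> float:
    """Fraction of all brand citations across tracked answers that name `brand`."""
    # set() ensures a brand mentioned twice in one answer is counted once.
    counts = Counter(b for cited in answers for b in set(cited))
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


answers = [
    ["Example SaaS", "Competitor X"],
    ["Competitor X"],
    ["Example SaaS", "Competitor Y", "Example SaaS"],
]
print(share_of_voice(answers, "Example SaaS"))  # 0.4
```

Tracking this number for the same prompt set over time is what turns a single snapshot into a trend you can alert on.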

To operationalize this audit, hand this exact 30-day sprint timeline to your engineering and growth teams.

Ans: An AI visibility checker simulates how Large Language Models parse your website. It analyzes your HTML structure, checks your schema markup, and verifies if your content is easily extractable by bots like GPTBot and ClaudeBot.

Ans: Focus your technical audits on the bots that drive B2B SaaS research. Ensure your site is fully accessible to OAI-SearchBot (ChatGPT), PerplexityBot (Perplexity), and Google-Extended (Gemini). Google AI Overviews are governed by Googlebot rather than Google-Extended, so Googlebot access matters for them.

Ans: You should run an automated AI readiness scan every time your development team pushes a major code update, changes the rendering logic, or alters the core schema markup on your money pages. At a minimum, run a full site audit quarterly.

© LLMClicks.ai All Rights Reserved 2026.