Web crawlers have governed how content gets discovered and indexed since the early days of the internet. Search engines like Google and Bing built their indexes entirely on crawler data, and webmasters learned to manage crawler access through robots.txt as a standard part of site maintenance.

AI crawlers introduced a new dimension to this established practice. When OpenAI launched GPTBot in August 2023, it became one of the first widely deployed crawlers whose stated purpose was not to build a search index but to collect training data for a large language model. The distinction matters because the consequences of blocking it differ significantly from the consequences of blocking a traditional search crawler.

The debate around AI crawler blocking has grown considerably since then. 25% of the top 1,000 websites now block GPTBot, up from 5% in early 2023. The reasons vary: intellectual property concerns, privacy compliance, content monetization protection, and, in some cases, a misunderstanding of what blocking actually does.

This guide covers the technical facts behind that decision. It explains what each major AI crawler does, how blocking affects AI citation rates and search visibility, which site types have legitimate reasons to block, and how to write a precise robots.txt configuration that protects private paths without removing public commercial content from AI discovery.

The guide covers eight active AI crawlers across OpenAI, Anthropic, Perplexity, Google, and Microsoft. Each has a distinct function, and a robots.txt policy written for one does not automatically apply to the others.

What Is GPTBot?

GPTBot is the web crawler operated by OpenAI. It reads publicly accessible web pages to supply content for ChatGPT’s underlying language models and its real-time search features.

Its user-agent string appears in HTTP requests as:

Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.1; +https://openai.com/gptbot

GPTBot operates within the same framework as traditional search engine crawlers: it respects robots.txt directives, cannot access paywalled or authenticated content, and does not influence traditional Google search rankings. OpenAI’s documentation confirms these behaviors explicitly.

As of early 2026, 25% of the top 1,000 websites block GPTBot, up from 5% in early 2023. The decision carries measurable SEO consequences in either direction, which this guide covers in full.

The Complete AI Crawler Index

GPTBot is one of at least eight active AI crawlers across the major platforms. Each serves a different function. Configuring robots.txt accurately requires understanding all of them.

| Crawler | Operator | Primary Function | Effect of Blocking |
| --- | --- | --- | --- |
| GPTBot | OpenAI | Training data for GPT models + real-time search | Removes brand from model weights and ChatGPT citations |
| OAI-SearchBot | OpenAI | Real-time retrieval for ChatGPT search results | Removes pages from live ChatGPT search responses |
| ChatGPT-User | OpenAI | Browsing agent during ChatGPT conversations | Blocks ChatGPT from reading pages during a chat session |
| ClaudeBot | Anthropic | Training data for Claude models | Removes brand from Claude’s trained knowledge |
| anthropic-ai | Anthropic | Real-time fetching for Claude responses | Prevents Claude from citing your content in answers |
| PerplexityBot | Perplexity | Indexing for Perplexity citations | Removes pages from Perplexity answers entirely |
| Google-Extended | Google | Training data for Gemini models | Reduces Gemini’s awareness of brand and product data |
| bingbot | Microsoft | Bing search index + Microsoft Copilot | Blocking affects both Bing rankings and Copilot responses |

OpenAI’s documentation confirms that GPTBot and OAI-SearchBot are treated as separate crawlers with independent robots.txt rules. A directive targeting GPTBot does not automatically apply to OAI-SearchBot.

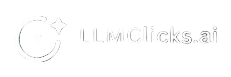

How AI Crawlers Differ from Search Engine Crawlers

Traditional crawlers like Googlebot examine a website and add it to a search index. When a user searches, the engine retrieves ranked results from that index.

AI crawlers use the same technical mechanism but serve a different downstream purpose. Instead of building a retrievable index, they collect content that is processed into training datasets for large language models. Once incorporated into a training run, that information becomes part of the model’s parametric knowledge, meaning it influences responses without any retrieval step.

This distinction has a practical consequence: being blocked from an index is a recoverable condition. A webmaster can remove a blocking rule and Googlebot will recrawl within days. Being absent from a model’s training data is not immediately recoverable. Training runs happen on irregular schedules that AI companies do not publicly disclose. A brand absent from a training cycle may remain underrepresented in that model version for 12 to 24 months until the next cycle.

Training Crawlers vs Inference Crawlers

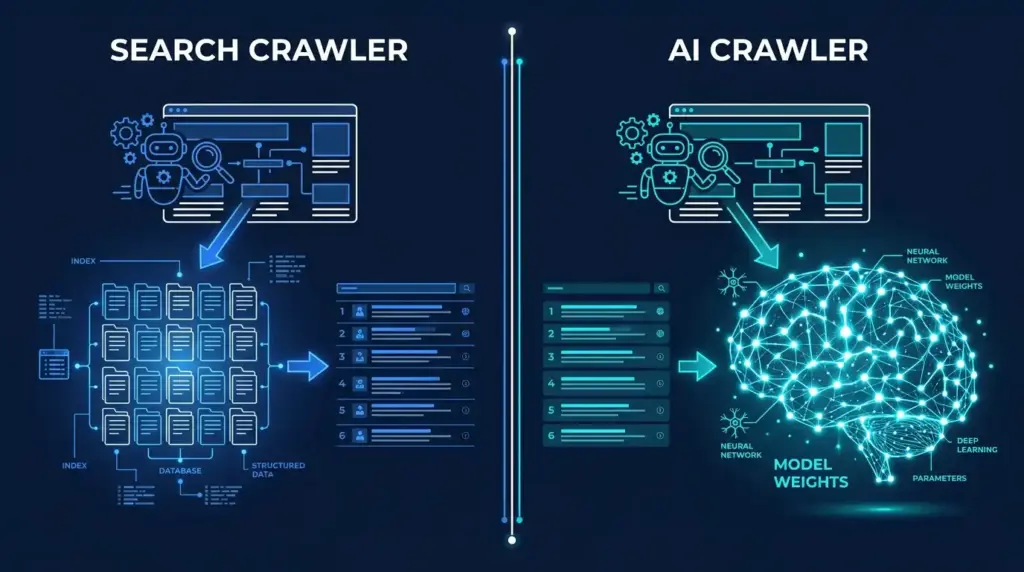

AI crawlers operate in two functionally distinct modes. Understanding the difference is the most important prerequisite for writing an accurate robots.txt policy.

Training Crawlers

Training crawlers collect content for inclusion in the model’s next training cycle. The content they scrape becomes part of the dataset that shapes the model’s weights during training. After training, those weights are fixed until the next training run.

Key training crawlers: GPTBot, ClaudeBot, Google-Extended

Consequence of blocking: your brand’s features, pricing, and category associations are not encoded into the model’s weights during the blocked period.

Inference Crawlers

Inference crawlers fetch content in real time during a user’s active conversation. They supplement the model’s trained knowledge with current web data to answer questions that require up-to-date information.

Key inference crawlers: OAI-SearchBot, ChatGPT-User, anthropic-ai, PerplexityBot

Consequence of blocking: your pages are excluded from real-time AI search results and citation responses, even if the model already has some training-based knowledge of your brand.

Why Both Matter

Blocking training crawlers affects long-term brand representation in model weights. Blocking inference crawlers affects immediate citation eligibility in AI search responses. Blocking both, which a blanket Disallow: / directive accomplishes, eliminates both channels simultaneously.

SEO Consequences of Blocking

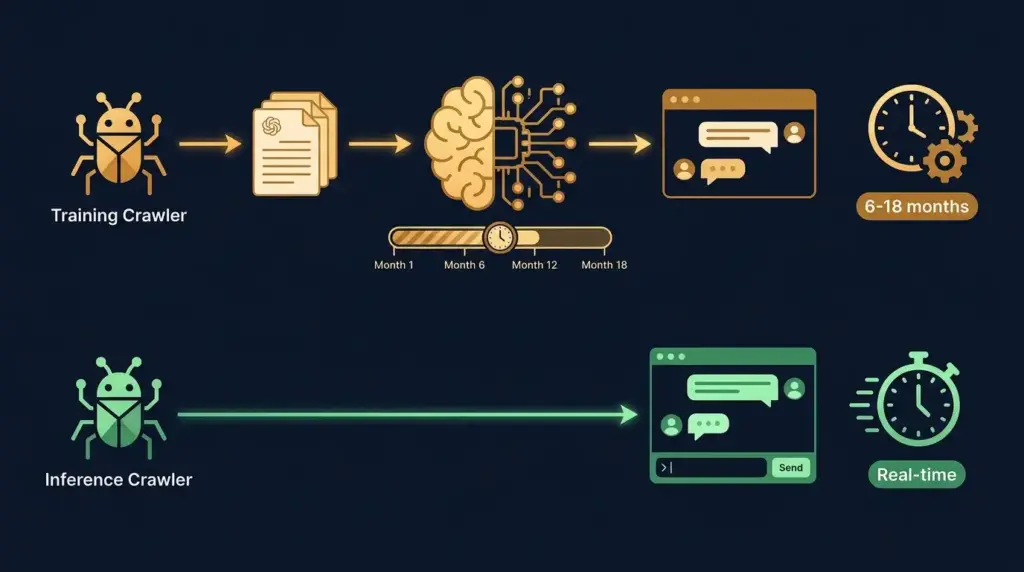

Effect on AI Citation Rates

Research from Princeton University’s Generative Engine Optimization study provides the most cited data on this question. Pages with authoritative citations appeared in 40% more generative responses, and pages containing specific statistics saw a 37% lift in AI citation rates. Pages that are blocked from crawling cannot accumulate either signal.

Content updated within 30 days receives 3.2x more ChatGPT citations than stale content, and sites with strong referring-domain profiles average 8.4 citations per AI-generated response. Both advantages require crawler access to function.

Effect on Google Search Rankings

Blocking GPTBot or other AI crawlers has no direct effect on Google search rankings. These are entirely separate systems. GPTBot and Googlebot are operated independently and their findings are processed through different pipelines.

The risk of indirect harm arises when a webmaster adds a blanket User-agent: * group with Disallow: / intending to block AI crawlers. That group also applies to Googlebot and bingbot unless they have their own named groups in the file. This is a configuration error, not an inherent consequence of blocking AI crawlers specifically.

Effect on Competitive Positioning

Sixty-one percent of enterprise sites use a hybrid robots.txt policy that allows public content while blocking sensitive paths. Sites that implement a blanket block may find competitors with open configurations accumulating citations in AI-generated recommendations over time.

No Effect On

- Traditional Google, Bing, or DuckDuckGo search rankings (assuming Googlebot and bingbot remain unblocked)

- Website performance or Core Web Vitals

- Paid search campaigns

- Email deliverability

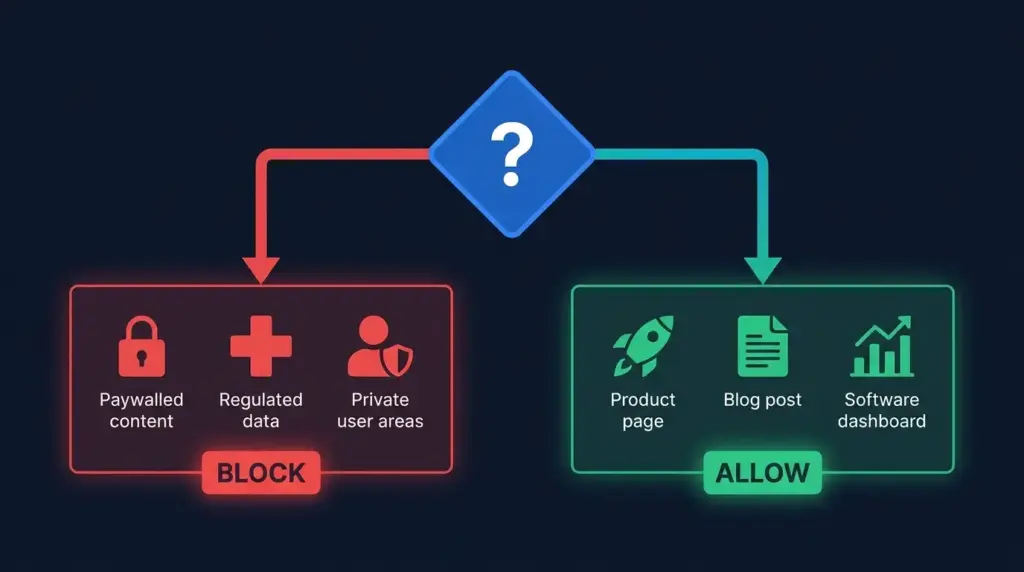

Who Should Block and Who Should Not

The decision depends on the type of content the site publishes and the business model it supports.

Block AI Crawlers When

- Paywalled or subscriber content: Once incorporated into model training data, paywalled articles, courses, or reports can be reproduced in AI responses without the paywall being presented to the user. The New York Times sued OpenAI in December 2023 over paywalled article reproduction in ChatGPT outputs.

- Regulated or sensitive content: Healthcare, financial advisory, and export-controlled content carries liability risk if reproduced in AI contexts without proper disclaimers. 68% of healthcare organizations have experienced data exposure incidents from misconfigured endpoints.

- Private or authenticated areas: Application dashboards, account settings, checkout pages, and API endpoints should always be blocked regardless of your general policy on AI crawlers.

- Proprietary research: Unique datasets, benchmark studies, or research that constitutes the core commercial value of the site may warrant training crawler blocks while keeping inference crawlers open.

Allow AI Crawlers When

- Public marketing content: Product pages, pricing pages, feature documentation, and company information benefit from AI crawler access. This content is already publicly visible; the only question is whether AI systems can read and cite it.

- Blog and knowledge base content: Informational content designed to demonstrate expertise benefits from AI citation. The primary purpose of this content type is reach and authority, both of which AI citation supports.

- B2B SaaS companies: Buyers in B2B software categories increasingly use AI tools during research phases. Brands absent from AI-generated comparisons and recommendations lose consideration at the top of the funnel before a sales conversation begins.

- E-commerce product pages: Product discovery in AI search is an emerging channel. Blocking AI crawlers from product pages removes the brand from this channel entirely.

The Hybrid Approach

61% of enterprise sites use a hybrid robots.txt policy: they allow GPTBot to crawl public content while blocking sensitive directories. This is the most common configuration among large commercial sites and the approach recommended by most technical SEO practitioners.

The Surgical robots.txt Configuration

The goal of a precise robots.txt policy is to protect private and sensitive paths while keeping commercial pages fully accessible to all AI crawlers.

Paths to Always Block

Regardless of your general policy, the following path types should be blocked from all crawlers including AI crawlers:

/app/

/account/

/dashboard/

/private-dashboards/

/checkout/

/cart/

/api/

/internal-kb/

/members/

/admin/

Paths to Always Allow

The following path types should remain accessible to AI training and inference crawlers:

/pricing/

/features/

/product/

/blog/

/knowledge-hub/

/docs/

/about/

/solutions/

Recommended Hybrid Configuration

# OpenAI training crawler
User-agent: GPTBot
Disallow: /app/
Disallow: /account/
Disallow: /dashboard/
Disallow: /checkout/
Disallow: /cart/
Disallow: /api/
Disallow: /members/
Allow: /

# OpenAI real-time search
User-agent: OAI-SearchBot
Disallow: /app/
Disallow: /account/
Disallow: /dashboard/
Disallow: /api/
Allow: /

# OpenAI chat browsing agent
User-agent: ChatGPT-User
Disallow: /app/
Disallow: /account/
Disallow: /dashboard/
Allow: /

# Anthropic training crawler
User-agent: ClaudeBot
Disallow: /app/
Disallow: /account/
Disallow: /checkout/
Disallow: /api/
Allow: /

# Anthropic inference crawler
User-agent: anthropic-ai
Disallow: /app/
Disallow: /account/
Allow: /

# Perplexity
User-agent: PerplexityBot
Disallow: /app/
Disallow: /account/
Disallow: /api/
Allow: /

# Google Gemini training
User-agent: Google-Extended
Disallow: /app/
Disallow: /account/
Disallow: /checkout/
Disallow: /api/
Allow: /
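The configuration above blocks a different subset of paths for each crawler, while the "Paths to Always Block" list names ten. One way to keep the two in sync is to generate the groups from a single list. The following is a minimal sketch, assuming every AI crawler should receive the same rules; the helper name `build_hybrid_robots` and the constants are illustrative and not part of any tool.

```python
# Hypothetical helper: builds one robots.txt group per AI crawler token.
AI_CRAWLERS = [
    "GPTBot", "OAI-SearchBot", "ChatGPT-User",
    "ClaudeBot", "anthropic-ai", "PerplexityBot", "Google-Extended",
]

# The ten path prefixes from "Paths to Always Block".
PRIVATE_PATHS = [
    "/app/", "/account/", "/dashboard/", "/private-dashboards/",
    "/checkout/", "/cart/", "/api/", "/internal-kb/", "/members/", "/admin/",
]


def build_hybrid_robots(crawlers, private_paths):
    """Return robots.txt text that denies the private paths and allows the rest."""
    groups = []
    for agent in crawlers:
        lines = [f"User-agent: {agent}"]
        lines += [f"Disallow: {path}" for path in private_paths]
        lines.append("Allow: /")
        groups.append("\n".join(lines))
    # One blank line between groups, none inside a group.
    return "\n\n".join(groups) + "\n"


if __name__ == "__main__":
    print(build_hybrid_robots(AI_CRAWLERS, PRIVATE_PATHS))
```

Generating the file this way trades per-crawler fine-tuning for consistency; sites that deliberately want different rules per crawler would keep the hand-written groups instead.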

Separating Training and Inference for OpenAI

For sites that want ChatGPT to cite their content in real-time search but do not want their content used for model training, OpenAI’s documentation confirms these can be controlled independently:

# Block training data collection
User-agent: GPTBot
Disallow: /

# Allow real-time search retrieval
User-agent: OAI-SearchBot
Allow: /

This configuration prevents content from entering OpenAI’s training pipeline while keeping pages eligible for citation in ChatGPT’s live search responses.
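The split can be checked locally before deploying. The sketch below uses Python's standard `urllib.robotparser` to evaluate the two groups; the URL is a placeholder, and Python's parser is only one interpretation of the protocol, so each crawler's own matching logic may differ in edge cases.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /
"""


def can_crawl(robots_txt: str, agent: str, url: str) -> bool:
    """Evaluate a robots.txt body for one user-agent token and one URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


url = "https://example.com/pricing/"  # placeholder URL
print(can_crawl(ROBOTS_TXT, "GPTBot", url))         # False
print(can_crawl(ROBOTS_TXT, "OAI-SearchBot", url))  # True
```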

All Eight Crawlers: Full robots.txt Reference

| Crawler | User-Agent String | Operator | Documentation URL |
| --- | --- | --- | --- |
| GPTBot | GPTBot | OpenAI | |
| OAI-SearchBot | OAI-SearchBot | OpenAI | |
| ChatGPT-User | ChatGPT-User | OpenAI | |
| ClaudeBot | ClaudeBot | Anthropic | |
| anthropic-ai | anthropic-ai | Anthropic | |
| PerplexityBot | PerplexityBot | Perplexity | |
| Google-Extended | Google-Extended | Google | |
| bingbot | bingbot | Microsoft | |

Several operators publish the IP ranges their crawlers use in their documentation. Where ranges are available, server log verification against them is more reliable than user-agent string verification alone: user-agent strings can be spoofed, while requests from a published range have to originate from the operator's own infrastructure.
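A range check of this kind can be sketched with Python's standard `ipaddress` module. The CIDR blocks below are placeholders taken from the reserved documentation ranges, not real crawler ranges, and `is_verified_crawler` is a hypothetical helper name; substitute the ranges the operator actually publishes.

```python
import ipaddress

# Placeholder CIDR blocks (reserved documentation ranges, RFC 5737).
# Replace with the ranges each operator actually publishes.
PUBLISHED_RANGES = {
    "GPTBot": ["192.0.2.0/24"],
    "PerplexityBot": ["198.51.100.0/24"],
}


def is_verified_crawler(agent: str, remote_ip: str, ranges=PUBLISHED_RANGES) -> bool:
    """True when remote_ip falls inside a range listed for the claimed agent."""
    address = ipaddress.ip_address(remote_ip)
    networks = (ipaddress.ip_network(cidr) for cidr in ranges.get(agent, []))
    return any(address in network for network in networks)


print(is_verified_crawler("GPTBot", "192.0.2.10"))   # True: inside the listed range
print(is_verified_crawler("GPTBot", "203.0.113.5"))  # False: claims GPTBot, wrong range
```

A request whose user-agent claims to be a crawler but whose IP fails this check is a candidate for spoofing and can be treated like any other unverified bot.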

llms.txt as a Complementary Protocol

llms.txt is an emerging file format placed at yourdomain.com/llms.txt. Where robots.txt is a permission file telling crawlers what they may and may not access, llms.txt is a preference file telling AI systems which content is most relevant to read.

The format uses markdown and contains links to priority pages with brief context:

# Brand Name

> One sentence describing what the organization does and who it serves.

## Core Pages

- [Page Title](/path/): Brief description of what this page contains
- [Pricing](/pricing/): Plan details, feature comparison, pricing tiers
- [Features](/features/): Full feature list and use case descriptions

## Documentation

- [Docs Home](/docs/): Technical documentation and integration guides

## Resources

- [Blog](/blog/): Published articles and guides

llms.txt is not a confirmed ranking factor. No AI platform has published official documentation stating that they use it to prioritize content. Its value is in providing structured, noise-free content representation that may benefit inference-time retrieval systems that parse markdown more efficiently than HTML. It is a low-cost addition to an AI-ready technical configuration.
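Because the file is plain markdown, it can be generated from the same page inventory a site already maintains. The following is a rough sketch, assuming a simple mapping of section titles to links; the function name `render_llms_txt` and the sample entries are illustrative only.

```python
def render_llms_txt(name, summary, sections):
    """Render llms.txt markdown from {section title: [(label, path, note), ...]}."""
    lines = [f"# {name}", "", f"> {summary}"]
    for title, links in sections.items():
        lines += ["", f"## {title}", ""]
        lines += [f"- [{label}]({path}): {note}" for label, path, note in links]
    return "\n".join(lines) + "\n"


sections = {
    "Core Pages": [
        ("Pricing", "/pricing/", "Plan details, feature comparison, pricing tiers"),
        ("Features", "/features/", "Full feature list and use case descriptions"),
    ],
    "Documentation": [
        ("Docs Home", "/docs/", "Technical documentation and integration guides"),
    ],
}
print(render_llms_txt("Brand Name", "One sentence describing the organization.", sections))
```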

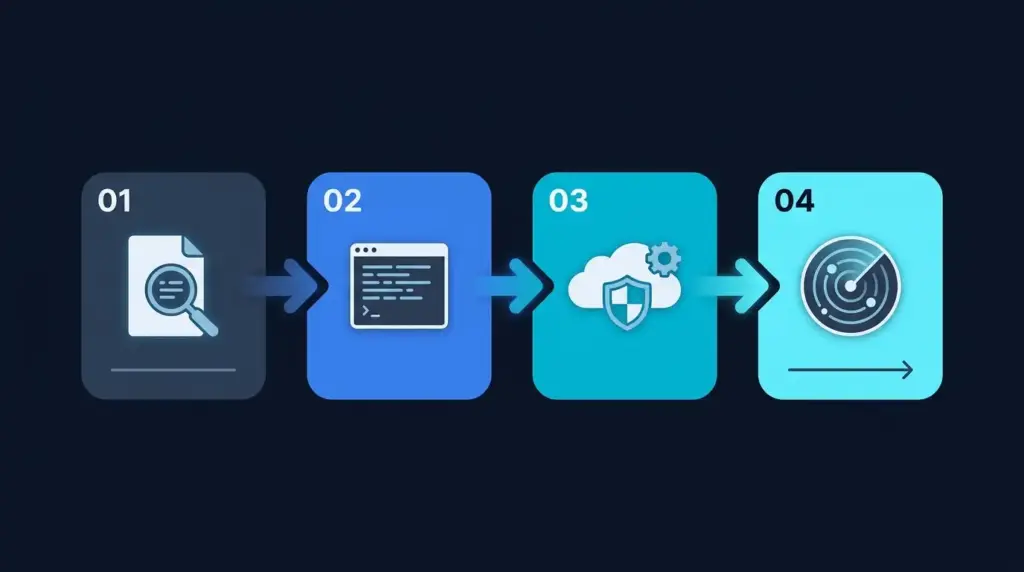

How to Verify Your Current Configuration

Step 1: Check Your robots.txt File

Access yourdomain.com/robots.txt directly in a browser. Patterns that indicate unintended AI crawler blocking:

# Blocks every crawler without its own named group, including AI crawlers
User-agent: *
Disallow: /

# Blocks GPTBot from the entire site (other OpenAI crawlers need their own rules)
User-agent: GPTBot
Disallow: /

Any Disallow: / directive for an AI crawler user-agent blocks that crawler’s access to the entire public site.
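The manual check can also be scripted. The sketch below, which assumes Python's standard `urllib.robotparser` as the evaluator, reports which crawlers cannot fetch the site root for a given robots.txt body. The training and inference groupings follow this guide; the "search" group and the function name `fully_blocked` are additions for illustration.

```python
from urllib.robotparser import RobotFileParser

CRAWLERS = {
    "training": ["GPTBot", "ClaudeBot", "Google-Extended"],
    "inference": ["OAI-SearchBot", "ChatGPT-User", "anthropic-ai", "PerplexityBot"],
    "search": ["Googlebot", "bingbot"],  # included to catch wildcard mistakes
}


def fully_blocked(robots_txt: str, probe_url: str = "https://example.com/") -> dict:
    """Map each crawler group to the agents that may not fetch the probe URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        group: [agent for agent in agents if not parser.can_fetch(agent, probe_url)]
        for group, agents in CRAWLERS.items()
    }


# A wildcard block shuts out search crawlers as well as AI crawlers.
print(fully_blocked("User-agent: *\nDisallow: /\n"))
# A GPTBot-only block leaves every other crawler untouched.
print(fully_blocked("User-agent: GPTBot\nDisallow: /\n"))
```

Any agent listed under "search" in the result signals the configuration error described earlier, where a rule meant for AI crawlers also removes the site from traditional search indexing.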

Step 2: Check Server Logs for Crawler Activity

Verify that AI crawlers are actively visiting the site by searching server access logs for these user-agent strings:

- GPTBot

- OAI-SearchBot

- ChatGPT-User

- ClaudeBot

- anthropic-ai

- PerplexityBot

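- Google-Extended (a robots.txt control token only; Google crawls with its existing user agents, so this string will not appear in access logs)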

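Absence of the other strings over a 30-day period suggests that the crawlers are filtered by a CDN or WAF, rate-limited at the server level, or have not yet discovered the site. A crawler that is blocked only by robots.txt will usually still appear in the logs requesting /robots.txt itself, so total absence points more strongly to a network-level block than to a robots.txt rule.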
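The log search can be sketched in a few lines of Python. This assumes one request per log line with the user-agent somewhere in the line, as in the common combined log format; the sample lines and the function name `count_crawler_hits` are made up for illustration. Google-Extended is omitted because Google documents it as a robots.txt control token without its own HTTP user-agent string.

```python
import re
from collections import Counter

AI_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User",
             "ClaudeBot", "anthropic-ai", "PerplexityBot"]
TOKEN_RE = re.compile("|".join(re.escape(t) for t in AI_TOKENS), re.IGNORECASE)


def count_crawler_hits(log_lines):
    """Count access-log lines per AI crawler token (case-insensitive match)."""
    canonical = {token.lower(): token for token in AI_TOKENS}
    hits = Counter()
    for line in log_lines:
        match = TOKEN_RE.search(line)
        if match:
            hits[canonical[match.group(0).lower()]] += 1
    return hits


# Illustrative lines; in practice iterate over open("access.log").
sample = [
    '192.0.2.10 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; GPTBot/1.1; +https://openai.com/gptbot"',
    '203.0.113.5 - - [10/Jan/2026:10:00:01 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
print(dict(count_crawler_hits(sample)))  # {'GPTBot': 1}
```

Matching on the user-agent alone counts spoofed requests too, so the totals are an upper bound until the source IPs are checked against published ranges.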

Step 3: Check CDN and WAF Configuration

Cloudflare, Fastly, and AWS CloudFront can block crawlers independently of robots.txt through security rules. Common configurations that unintentionally block AI crawlers include:

- Cloudflare Bot Fight Mode: Enabled under Security > Bots, this challenges automated traffic including AI crawlers. Disable this setting or create bypass rules for verified AI crawler IP ranges.

- Custom WAF rules: Rules that challenge or block requests with non-browser user-agents will block all AI crawlers. Review custom rules for patterns matching bot, crawler, or spider.

- Rate limiting: Aggressive rate limits applied to all non-human traffic can throttle AI crawlers to the point where they stop crawling. Review rate limit thresholds for the IP ranges published by each AI platform.
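When reviewing custom WAF rules, it can help to test a rule's pattern against the crawler tokens directly. The sketch below uses Python's `re` module as a stand-in for the WAF's matcher, which is an assumption: each CDN has its own expression syntax, and real rules match the full user-agent header rather than the bare token.

```python
import re

AI_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User",
             "ClaudeBot", "anthropic-ai", "PerplexityBot"]


def tokens_matched_by(pattern: str, tokens=AI_TOKENS):
    """Return the crawler tokens a case-insensitive WAF-style regex would match."""
    rule = re.compile(pattern, re.IGNORECASE)
    return [token for token in tokens if rule.search(token)]


print(tokens_matched_by(r"bot|crawler|spider"))
# ['GPTBot', 'OAI-SearchBot', 'ClaudeBot', 'PerplexityBot']
```

In this sketch ChatGPT-User and anthropic-ai do not match the substring pattern on their token alone, so a generic bot rule can produce an uneven policy where some AI crawlers are challenged and others pass.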

Step 4: Run a Technical Crawlability Scan

The LLMClicks AI Readiness Analyzer checks crawler accessibility, entity clarity, and schema validation in one automated scan. It flags blocked crawlers, render-blocking scripts that prevent content parsing, and missing structured data.

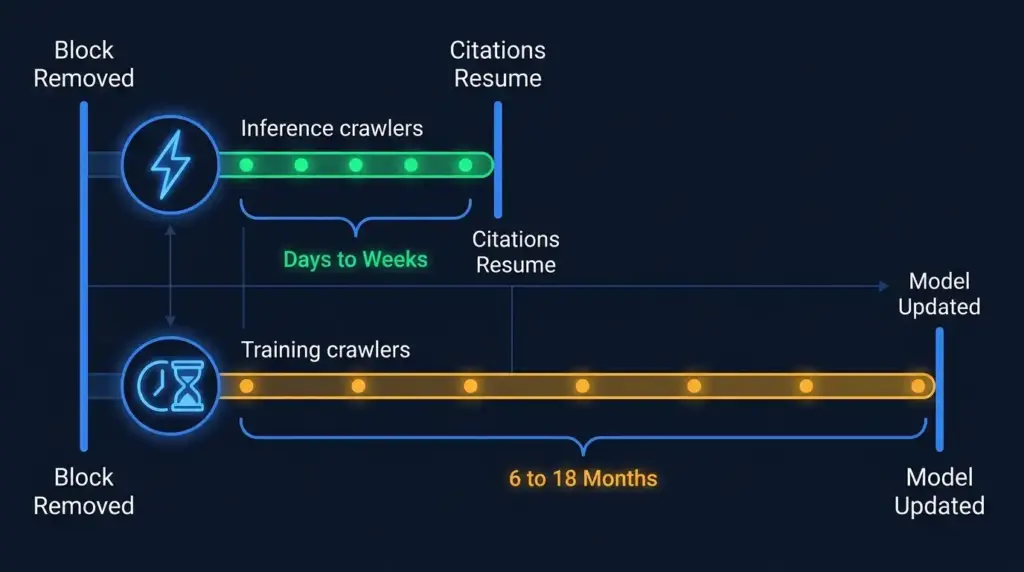

Recovery Timeline After Re-Enabling Crawlers

For sites that have blocked AI crawlers and are reversing that policy, the recovery timeline differs by crawler type.

Inference Crawler Recovery (Fast)

OAI-SearchBot, ChatGPT-User, anthropic-ai, and PerplexityBot fetch content at query time. Once a block is removed:

- Crawlers typically attempt re-access within days

- Pages become eligible for citation in real-time AI search responses within 1 to 4 weeks

- Recovery speed depends on crawl frequency, which varies by domain authority and content update rate

Training Crawler Recovery (Slow)

GPTBot, ClaudeBot, and Google-Extended collect training data for periodic model updates. Recovery is slower because it depends on when the next training cycle processes newly accessible content.

- No public disclosure from OpenAI, Anthropic, or Google on training cycle schedules

- Conservative estimate: 6 to 18 months for newly accessible content to influence model weights in a production release

- The practical approach is to re-enable training crawlers immediately and simultaneously optimize for inference crawler retrieval to generate citations in the near term

Near-Term Strategy During Training Recovery

While waiting for training cycles to incorporate re-enabled content, structuring pages for inference-time retrieval can produce citations from real-time search crawlers faster than training data recovery allows:

- Implement FAQPage schema on pages targeting informational queries

- Use answer-first content structure with direct responses in the first 100 words

- Ensure clean HTML rendering without JavaScript dependencies for core content

- Maintain fresh content update dates, as content updated within 30 days receives 3.2x more ChatGPT citations than stale content
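For the FAQPage item in the list above, the markup follows the schema.org vocabulary: a `FAQPage` whose `mainEntity` is a list of `Question` items, each with an `acceptedAnswer`. A minimal sketch that builds the JSON-LD from question and answer pairs follows; the function name `faq_jsonld` and the sample pair are illustrative.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    ("Does blocking GPTBot affect Google rankings?",
     "No. Blocking GPTBot has no direct effect on Google rankings."),
]))
```

The output is intended to be embedded in the page inside a `<script type="application/ld+json">` element, with the questions and answers matching the text visible on the page.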

Frequently Asked Questions

Q1. What is GPTBot and what does it do?

Ans: GPTBot is OpenAI’s web crawler used to collect publicly accessible content for ChatGPT model training and real-time search retrieval. It respects robots.txt directives, cannot access authenticated content, and has no effect on traditional Google search rankings.

Q2. Does blocking GPTBot affect Google rankings?

Ans: No. GPTBot and Googlebot are separate systems operated by different companies. Blocking GPTBot has no direct effect on Google rankings. The risk exists only if a webmaster uses a broad User-agent: * directive that inadvertently blocks Googlebot alongside AI crawlers.

Q3. What is the difference between GPTBot and OAI-SearchBot?

Ans: GPTBot crawls content for model training and can also be used for real-time retrieval. OAI-SearchBot crawls specifically for ChatGPT’s live search feature. OpenAI confirms they are treated as separate crawlers with independent robots.txt rules. A site can block one while allowing the other.

Q4. How many websites are currently blocking GPTBot?

Ans: As of early 2026, 25% of the top 1,000 websites block GPTBot, up from 5% in early 2023. The majority of these are media publishers, academic institutions, and sites with paywalled content.

Q5. What is a hybrid robots.txt policy?

Ans: A hybrid policy allows AI crawlers to access public commercial content while blocking private or sensitive paths such as account dashboards, checkout flows, and API endpoints. 61% of enterprise sites use this approach.

Q6. Does blocking AI crawlers protect copyright?

Ans: Not on its own. robots.txt is a voluntary protocol. AI companies state in their documentation that they respect these directives, but compliance is not technically enforced. Legal protection for content requires terms of service, copyright registration, and, where necessary, litigation. The New York Times filed suit against OpenAI in December 2023 after its paywalled articles appeared in ChatGPT responses.

Q7. What is llms.txt and should I implement it?

Ans: llms.txt is an optional markdown file placed at the root domain that signals to AI systems which pages are most relevant. It is not a confirmed ranking signal for any AI platform. It adds semantic structure that may benefit inference-time retrieval and is straightforward to implement.

Q8. If I blocked AI crawlers six months ago, how long will recovery take?

Ans: Inference crawler recovery typically occurs within days to weeks after removing the block. Training crawler recovery depends on when the next model training cycle processes your content, which AI companies do not publish on a fixed schedule. The practical estimate is 6 to 18 months for training data to influence production model responses.

Related Resources

- How to Audit Your Website for AI Search Readiness – complete technical checklist covering crawl accessibility, schema, and entity optimization

- How LLMs Work: A Complete Guide – explains how training data becomes model knowledge and why crawl access affects long-term brand representation

- Free AI Readiness Analyzer – automated scan of crawler accessibility, entity clarity, and schema validation