I built LLMClicks.ai because Otterly was running on our account and still missed the problem that was costing us demos.

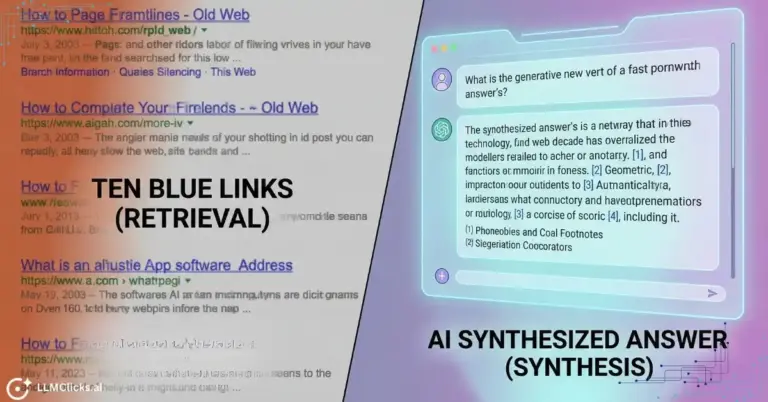

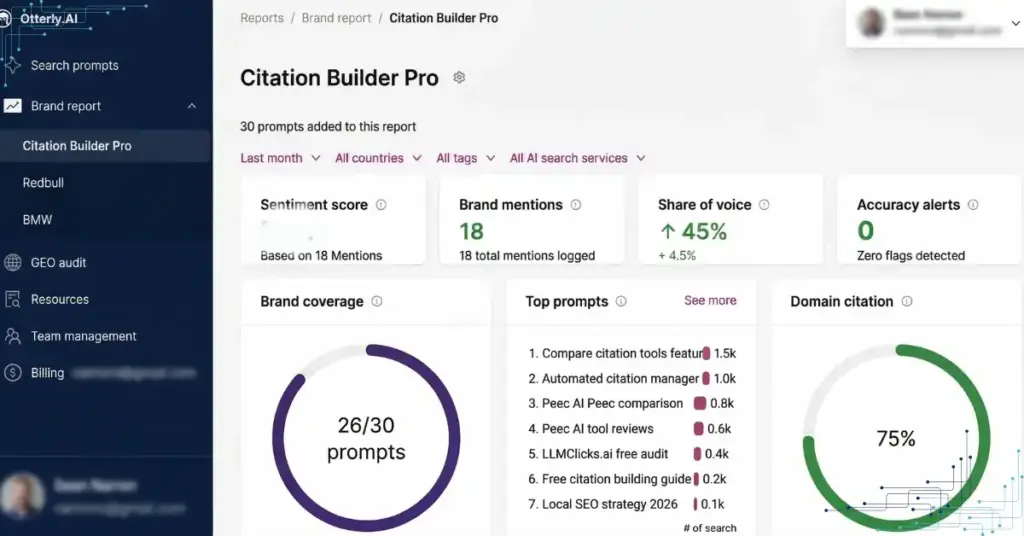

ChatGPT was telling prospects our Pro plan cost $79 per month. Our actual price was $49. Prospects showed up to calls already skeptical. Some did not show up at all. When I checked our Otterly dashboard, it showed healthy mention counts and positive sentiment. Eighteen brand mentions logged. Zero alerts.

The tool was working exactly as designed. The design just did not include accuracy checking.

That is the gap this comparison is built around.

What I tested:

- The same 30 prompts run across Otterly, Peec, and LLMClicks.ai

- Five prompt categories: brand awareness, competitive comparison, buyer intent, solution-seeking, and feature-specific queries

- Tracked on ChatGPT, Perplexity, Claude, and Gemini

- Documented what each tool flagged, what each tool missed, and what the missed detections actually cost in pipeline terms

What you will get from this post:

- An honest breakdown of what Otterly does well and where it stops short

- The same for Peec, including the hidden cost structure that catches teams off guard

- A clear picture of what LLMClicks.ai adds on top of both

- A decision framework for your specific situation

- A free way to check your own brand’s AI accuracy before spending anything

One thing before we go further: if you want to see what AI platforms are currently saying about your brand, the free AI Visibility Checker takes under two minutes. No credit card required. The results will give you useful context for everything that follows.

Why AI Visibility Tracking Is Now Non-Negotiable for SaaS Brands

Most SaaS teams discover they have an AI visibility problem the same way I did: a prospect quotes wrong information on a demo call, and you realise an AI platform has been misrepresenting your product to buyers for months without a single alert.

The shift from Google-first to AI-first discovery happened faster than most marketing teams anticipated. Understanding what that shift actually means for your pipeline is the starting point for evaluating any tool in this comparison.

AI Search Has Changed Where Buying Decisions Begin

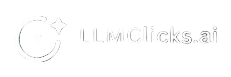

Before a buyer visits your website, books a demo, or clicks a Google result, they are asking ChatGPT or Perplexity a direct question: “What is the best AI visibility tracker for a SaaS company?”

They get one synthesised answer. Three to five tools mentioned. A directional verdict. That shortlist forms before your website ever loads.

According to Similarweb’s 2025 Generative AI Report, AI platforms generated over 1.1 billion referral visits in June 2025, up 357% year-over-year. That is not a trend to prepare for. It is already your buyer’s default research behaviour.

If your brand is not in that AI answer, you do not exist for that buyer at that moment. If your brand is in that answer with wrong information, you have a harder problem than invisibility.

Monitoring and Accuracy Are Two Different Problems

This is the distinction that separates tools in this category, and the one most teams do not realise matters until it costs them.

Monitoring answers one question: is my brand appearing in AI responses?

Accuracy validation answers a different question: is what AI says about my brand correct?

Every tool in this comparison does monitoring. Only one does both.

For a content brand, monitoring is often enough. Being cited is the win. For a SaaS company, the content of those citations directly affects revenue:

- Wrong pricing creates friction before the demo starts

- Misattributed features send buyers to competitors who actually offer them

- Hallucinated integrations create expectation gaps that kill deals mid-trial

Here is the practical difference in what each approach delivers:

| What monitoring tells you | What accuracy validation tells you |
| --- | --- |
| “Your brand appeared in 18 AI responses” | “4 of those responses quoted incorrect pricing” |
| “You appeared in 60% of tracked prompts” | “3 responses attributed a competitor’s feature to you” |
| “Positive sentiment detected” | “1 response cited an integration that does not exist” |

When our pricing hallucination was active, Otterly showed 18 mentions and positive sentiment. No alerts. The dashboard looked healthy while prospects were receiving wrong data that undermined our credibility on calls.
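To make the distinction concrete, here is a minimal sketch of the two checks side by side. It is an illustration only, not how any of the three tools works internally: the `check_response` function, the `Finding` class, and the sample response text are all hypothetical, and it naively assumes every dollar amount in a response refers to the brand being checked. The $79 and $49 figures are the ones from our own pricing incident.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Finding:
    mentioned: bool                                   # all a mention monitor records
    wrong_prices: list = field(default_factory=list)  # quoted amounts that differ from the real price


def check_response(text: str, brand: str, actual_price: float) -> Finding:
    """Flag dollar amounts in a brand mention that differ from the real price."""
    if brand.lower() not in text.lower():
        return Finding(mentioned=False)
    # Naive assumption: every "$<number>" in the response refers to this brand.
    quoted = [float(m) for m in re.findall(r"\$(\d+(?:\.\d{1,2})?)", text)]
    return Finding(mentioned=True, wrong_prices=[p for p in quoted if p != actual_price])


# Hypothetical response text; the price figures come from the incident described in this post.
response = "LLMClicks.ai is a strong option. The Pro plan costs $79 per month."
finding = check_response(response, brand="LLMClicks.ai", actual_price=49.0)
print(finding.mentioned, finding.wrong_prices)
```

A monitoring-only tool stops at `mentioned`. The second field is the part that would have caught our problem, and a real validator would need far more than a regular expression to attribute each price to the right product and plan.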

Why Your Existing SEO Stack Cannot Fill This Gap

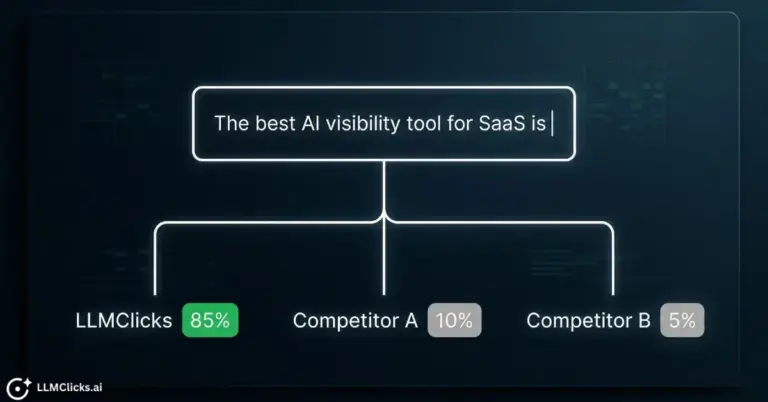

GSC, Ahrefs, Semrush: all built for a ranked-list search environment where users see multiple results, click through, and evaluate your content themselves. That environment still exists. But a growing share of buyer research never touches it.

AI search collapses the journey. No ranked list. One synthesised answer. The buyer’s evaluation of your brand happens entirely inside the AI response, based on what the model says, not what your website says.

No rank tracking tool was built for an environment where there are no ranks to track. That is exactly why a dedicated AI visibility tool has become a non-negotiable line item for SaaS marketing teams in 2026.

The question is not whether you need one. The question is which one actually solves the full problem.

Otterly AI: Great for Getting Started, Not for Protecting Revenue

Most teams discover Otterly the same way: they search for an AI visibility tool, find it ranks well, see the clean dashboard in a demo, and sign up within a week. The setup takes under 30 minutes. The first report lands fast. It feels like the problem is solved.

For basic brand awareness monitoring, it largely is. The issue surfaces later, when something goes wrong in an AI response and Otterly does not catch it.

What Otterly Does Well

Otterly earned its market position for legitimate reasons. If you are just getting started with AI visibility tracking, it removes the barriers.

Fast Setup, Clean Interface

Otterly connects quickly, prompts are easy to configure, and the dashboard is readable without a training session. For solo founders or small marketing teams who need to answer “is our brand showing up in AI search,” it delivers that answer fast.

Most competing tools in this category require onboarding calls, credit system configuration, or platform add-ons before you see useful data. Otterly does not.

Solid Prompt Monitoring Across Core LLMs

Otterly tracks brand mentions across ChatGPT and Perplexity reliably. For teams whose buyers are concentrated on those two platforms, the coverage is adequate for basic monitoring.

It logs mention frequency, tracks share of voice against competitors, and surfaces which prompts are triggering brand mentions. That is genuinely useful data for teams building their first GEO baseline.

Accessible Price Point for Early-Stage Teams

At approximately $49 per month for entry-level access, Otterly is the most affordable paid option in this category. For a startup that needs to prove AI visibility ROI to a skeptical leadership team before committing to a larger platform, the price point removes the approval friction.

Where Otterly Falls Short for SaaS Brands

The limitations are not bugs. They reflect deliberate product decisions. Otterly was built to monitor mentions, not validate them. For SaaS brands where pricing accuracy and feature attribution directly affect pipeline, that distinction becomes expensive.

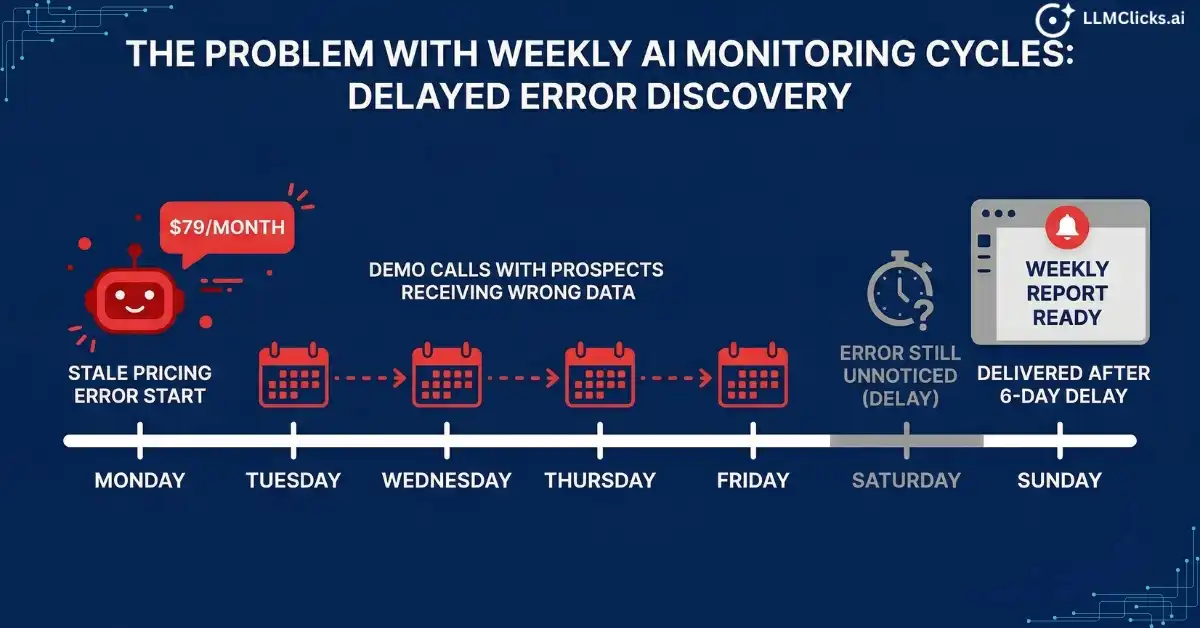

Weekly Update Cycles Miss the Window That Matters

AI responses change faster than Otterly’s reporting cycle. With a weekly snapshot, a hallucinated pricing figure or a misattributed feature can circulate in buyer research for up to seven days before it even appears in a report.

In practice, that window covers multiple demo calls, inbound inquiries, and trial signups, all shaped by information you did not know was wrong.

No Hallucination Detection

This is the core gap. Otterly tracks whether your brand appears in an AI response. It does not evaluate whether the content of that response is accurate.

A mention describing you as a tool that “integrates natively with HubSpot” logs as a positive brand signal, even if you have no such integration. A response quoting your discontinued pricing tier counts as visibility. The dashboard looks healthy while buyers are receiving misinformation.

When I ran our 30-prompt test set through Otterly, it correctly identified all 18 brand mentions. It flagged zero accuracy issues. Four of those mentions contained incorrect information about our product.

No Free Tier for Testing Accuracy Claims

Before committing to any AI visibility tool, you should be able to verify that your brand actually has an accuracy problem worth paying to monitor. Otterly does not offer a permanent free tier, which means you are paying before you know whether the platform solves your specific problem.

Who Should Use Otterly

Otterly is the right call if:

- You are in early discovery mode and need to establish a baseline before investing in a more comprehensive platform

- Your brand awareness is the primary metric your leadership team cares about, not conversion accuracy

- Your budget is under $50 per month and basic mention monitoring is sufficient for your current stage

- You are a content brand, media company, or agency that tracks client visibility scores as a deliverable rather than a revenue signal

Otterly is the wrong call if:

- You are a SaaS company where pricing, features, and integrations are actively discussed in AI responses

- You have had any indication that AI platforms may be misrepresenting your product

- You need real-time or daily monitoring rather than weekly snapshots

- You want to validate accuracy before assuming your AI visibility is healthy

Peec AI: Strong Agency Reporting, Still Misses the Accuracy Problem

Peec AI attracts a different buyer than Otterly. Where Otterly wins on simplicity, Peec wins on structure. The reporting is polished, the multi-language support is genuinely best-in-class, and agencies managing international clients will find it fits their workflow better than anything else in this price range.

The accuracy gap is identical. The pricing model adds a second problem on top of it.

What Peec Does Well

Peec was built with agencies in mind, and it shows in every part of the product that matters for client-facing work.

Multi-Language Support That Actually Works

Peec tracks AI responses across 115+ languages. For global brands or agencies managing clients in non-English markets, this is not a feature that competitors match at the same price point.

If your buyers are asking ChatGPT questions in German, French, Portuguese, or Japanese, Peec surfaces how your brand appears in those responses. Most tools in this category are English-first by design. Peec is not.

Region-Based Prompt Tracking

Beyond language, Peec lets you configure prompts by geographic region. An AI response to “best AI visibility tool” in the US does not always match the response in the UK or Australia. Peec tracks those differences systematically.

For agencies delivering monthly reports to clients operating across multiple markets, this is the feature that justifies the platform over simpler alternatives.

Clean Client-Facing Reports

Peec’s reporting output is structured for presentation. Visibility scores, mention trends, share of voice against named competitors: the format works in a client review without requiring manual reformatting.

If your deliverable to clients includes an AI visibility section, Peec produces that section faster than building it manually from raw monitoring data.

Where Peec Falls Short

Peec’s limitations fall into two categories: a pricing structure that penalises growth, and the same accuracy gap that limits Otterly.

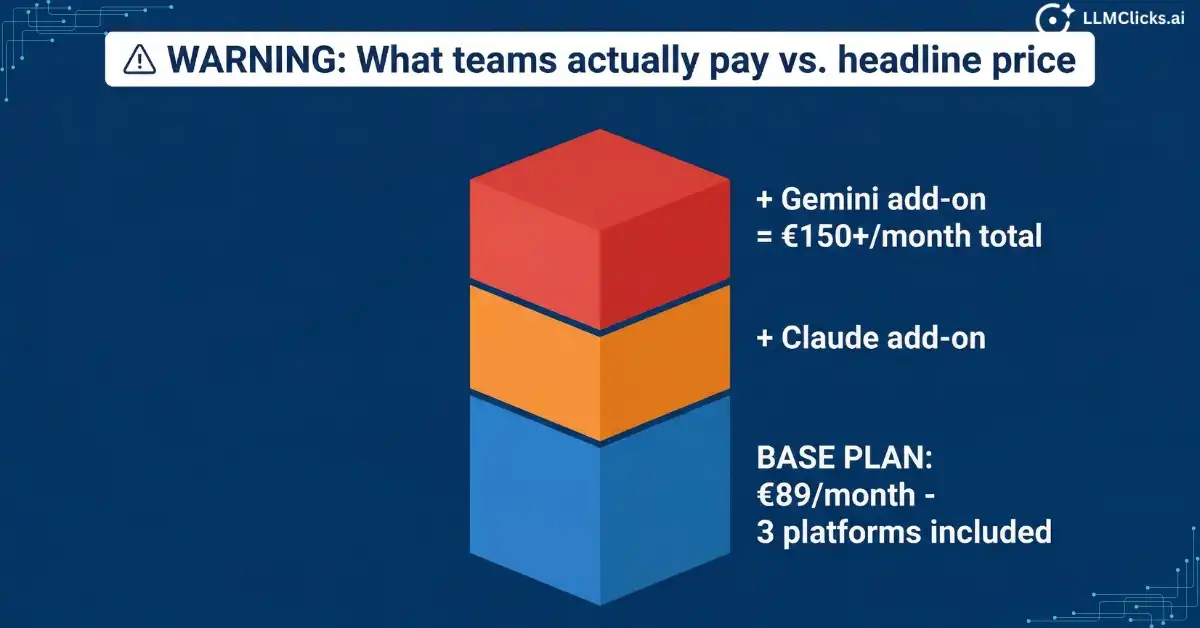

The Hidden Cost Structure

Peec’s entry price of €89 per month sounds reasonable until you configure the platforms you actually need to track.

The base plan covers three AI platforms. ChatGPT and Perplexity are included. Claude and Gemini are add-ons. If your buyers use all four, and most do, the real cost climbs to €150 or higher per month before you have added any additional prompt volume.

From my testing across competing tools in this category, here is what that pricing structure looks like in practice:

| Configuration | Monthly Cost |
| --- | --- |
| Base plan (3 platforms) | €89 |
| Add Claude | €89 + add-on |
| Add Gemini | €89 + two add-ons |
| Full coverage (4 platforms) | €150+ |

Teams that budget based on the headline price and expand later consistently report sticker shock at renewal. Factor in the full platform coverage cost before comparing Peec’s pricing against alternatives.
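A quick way to see the budgeting gap is to annualise both figures. The sketch below uses only the €89 headline price and the €150 figure quoted above; since €150 is a lower bound ("€150 or higher") and the individual add-on prices are not listed here, treat the result as a minimum, not an exact quote.

```python
HEADLINE_EUR = 89        # base plan price quoted above
FULL_COVERAGE_EUR = 150  # lower bound for four-platform coverage quoted above


def annual_cost(monthly_eur: int, months: int = 12) -> int:
    """Total spend over a billing period at a flat monthly price."""
    return monthly_eur * months


headline_year = annual_cost(HEADLINE_EUR)
full_year = annual_cost(FULL_COVERAGE_EUR)
gap = full_year - headline_year

print(f"Budgeted on headline price: €{headline_year}")
print(f"Full coverage (minimum):    €{full_year}")
print(f"Unbudgeted difference:      €{gap}")
```

At these figures the difference is at least €732 over twelve months, which is the number to bring to a renewal conversation.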

Reporting Depth Without Optimization Guidance

Peec shows you where you stand. It does not tell you what to do about it.

The visibility score goes up or down. The mention count changes week over week. Share of voice shifts against competitors. What Peec does not deliver is a specific recommendation for why your score dropped or what content change would improve your citation rate.

For agencies that need to answer “what do we do next” in a client meeting, Peec creates a gap between the data it surfaces and the action it recommends.

No Accuracy or Hallucination Detection

Same core problem as Otterly, different price point.

I ran our standard 30-prompt test set through Peec. Brand mention count: accurate. Sentiment classification: positive across the board. Accuracy flags on incorrect pricing, misattributed features, or hallucinated integrations: zero.

Peec classifies a response containing wrong product information as a successful brand mention because the brand name appeared. For a SaaS company, that classification is not just wrong; it is actively misleading. A healthy Peec dashboard can coexist with an active accuracy problem draining your pipeline.

Who Should Use Peec

Peec is the right call if:

- You run a multi-language agency managing clients across international markets

- Your primary deliverable is a structured AI visibility report, not optimization recommendations

- Your clients measure AI visibility as a KPI rather than a revenue signal

- You need region-specific prompt tracking as a core feature, not a nice-to-have

Peec is the wrong call if:

- You want full platform coverage without paying per-platform add-on fees

- You need to know whether AI responses containing your brand are accurate

- Your team needs actionable next steps, not just visibility scores

- You are a SaaS brand where pricing, features, and integrations are actively cited in AI responses

LLMClicks.ai: Built for the Problem Otterly and Peec Do Not Solve

Most AI visibility tools were built by teams who asked: “How do we show marketers where their brand appears in AI responses?”

LLMClicks.ai was built by a team who asked a different question: “How do we show marketers when AI is actively lying about their brand?”

That starting point produces a fundamentally different product.

The Accuracy Validation Layer: What Makes It Different

Every other tool in this comparison measures visibility as a binary: your brand appeared, or it did not. LLMClicks.ai measures visibility as a spectrum: your brand appeared, and here is whether what was said about you was accurate.

The 120-point AI Brand Audit checks what no monitoring tool covers:

- Pricing accuracy: Is the price AI quotes for your product correct?

- Feature attribution: Are the features AI describes actually yours, or a competitor’s?

- Integration claims: Is AI citing integrations your product does not have?

- Competitive misidentification: Is AI confusing your product with a competitor in ways that redirect buyers?

- Outdated information: Is AI pulling from old product pages, deprecated plans, or discontinued features?

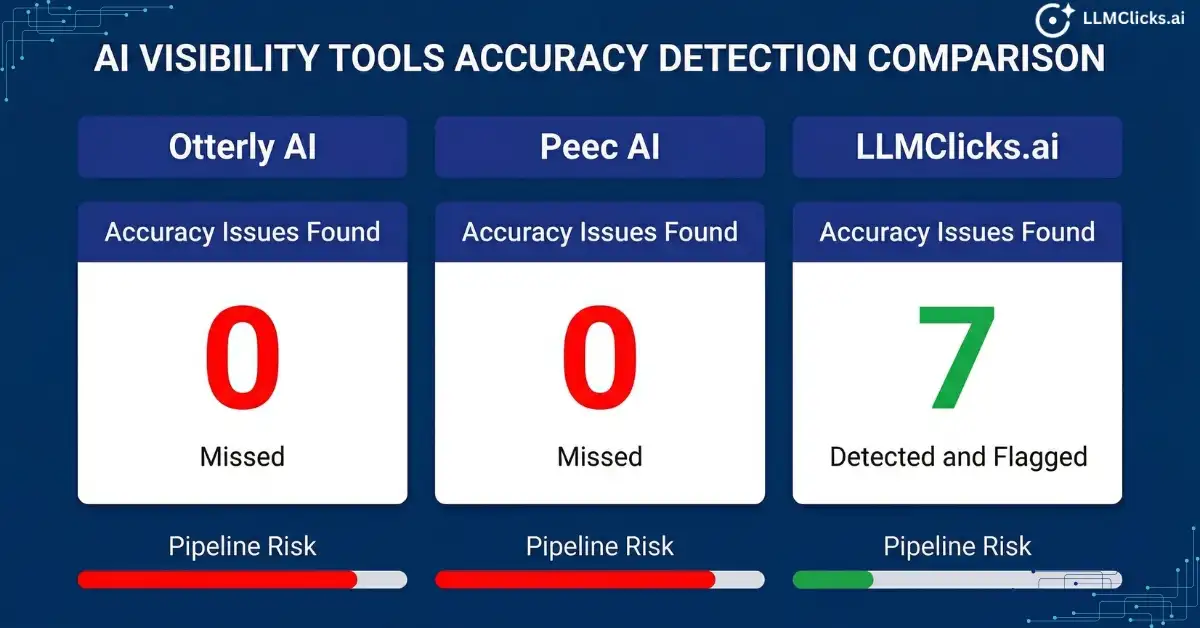

When I ran our standard 30-prompt test set through LLMClicks.ai alongside Otterly and Peec, the results were the clearest argument for the platform I could have designed.

- Otterly: 18 mentions detected, 0 accuracy flags

- Peec: 18 mentions detected, 0 accuracy flags

- LLMClicks.ai: 18 mentions detected, 7 accuracy issues flagged across 4 responses

Those 7 issues included the pricing hallucination that had been running through our pipeline for months. Neither Otterly nor Peec had surfaced it once.

Core Modules and What Each One Does

LLMClicks.ai is a suite, not a single-feature tool. Understanding which modules are relevant to your situation determines how much of the platform you actually need.

AI Visibility Audit

The 120-point accuracy check. This is the core differentiator. It runs your brand across ChatGPT, Perplexity, Claude, and Gemini and evaluates not just whether you appear, but whether what is said about you is factually consistent with your actual product.

For SaaS brands, this is where the ROI case is clearest. One demo with an accurately informed prospect is worth more than 18 monitored mentions that carry wrong information.

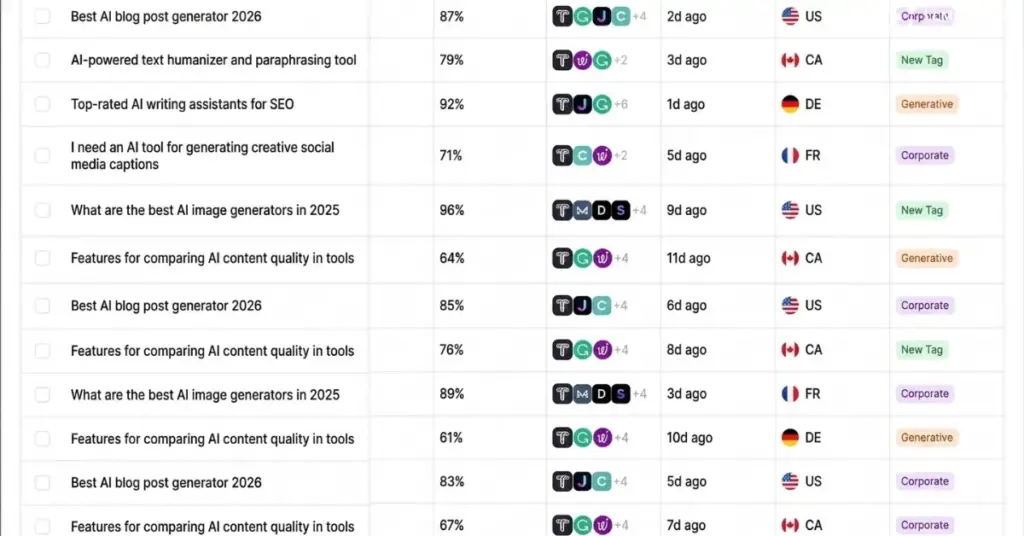

Prompt Tracker

Monitors specific prompts that matter to your pipeline, not just brand name appearances. The difference matters because buyers do not ask ChatGPT “tell me about LLMClicks.ai.” They ask “what is the best AI visibility tool for a SaaS company under $100 per month.” Prompt Tracker follows those buyer-intent queries and shows where your brand lands, or does not land, in the responses.

LLM Traffic Tracker

Shows actual referral traffic arriving from AI platforms, not just response mentions. Otterly and Peec tell you that you appeared in an AI response. LLM Traffic Tracker tells you how many buyers that response sent to your website. That closes the loop between AI visibility and business impact in a way that dashboards full of mention counts never do.
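If you want a rough version of this signal from your own analytics before adopting any tool, one approach is to bucket visits by referrer hostname. The sketch below is an assumption-laden illustration, not a description of how LLM Traffic Tracker works: the hostname list is my own guess at common referrers for the four platforms and will need maintaining, and the function names are hypothetical. Visits that arrive without a referrer header will be missed entirely.

```python
from collections import Counter
from typing import Iterable, Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for the four platforms; verify against your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI platform for a referrer URL, or None if it is not a known AI host."""
    host = (urlparse(referrer).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host)


def count_ai_visits(referrers: Iterable[str]) -> Counter:
    """Count visits per AI platform, ignoring every other referrer."""
    platforms = (classify_referrer(r) for r in referrers)
    return Counter(p for p in platforms if p is not None)


# Hypothetical referrer values as they might appear in a web server log.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=ai+visibility+tools",
    "https://www.google.com/search?q=ai+visibility+tools",
    "https://chat.openai.com/",
    "",
]
print(count_ai_visits(sample))
```

Exact hostname matching is deliberate here: substring matching would misclassify unrelated domains that happen to contain a platform name.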

Query Fan-Out Coverage

Maps the full prompt universe around your brand. For every core query you track, Query Fan-Out Coverage surfaces the related questions AI platforms associate with your product category. It tells you which adjacent queries you are missing and which ones your competitors own. This is the GEO strategy layer that monitoring tools do not have.

Free Tools: AI Visibility Checker and AI Readiness Analyzer

This is the entry point that neither Otterly nor Peec offers. Both free tools are permanent, require no credit card, and take under two minutes to return results.

The AI Visibility Checker shows your current brand presence across LLMs. The AI Readiness Analyzer scores how well your content is structured for AI citation. Together they give you a complete picture of your current AI accuracy situation before you spend anything.

For teams that need to build an internal business case before committing to a paid platform, these two tools are the fastest path to a concrete, data-backed argument.

Honest Limitations

LLMClicks.ai is a newer platform than Otterly and Peec. That comes with trade-offs worth naming directly.

- Brand recognition: Otterly has broader market awareness. If you are presenting a tool shortlist to a CMO who has already heard of Otterly, LLMClicks.ai requires a stronger internal sell.

- Platform maturity: Some features are still in active development. If your evaluation criteria include a long-established feature set and enterprise SLAs, factor in the beta stage.

- Use case fit: LLMClicks.ai is built for teams where accuracy directly affects revenue. If your team genuinely only needs mention counts and a clean dashboard, the full platform is more than you need. Otterly will serve that use case at a lower price point.

Who Should Use LLMClicks.ai

LLMClicks.ai is the right call if:

- You are a SaaS company where pricing, features, and integrations are actively cited in AI responses and you need to know whether those citations are accurate

- You have had any signal that AI may be misrepresenting your product: a prospect quoting wrong information, a competitor’s feature attributed to you, or a hallucinated integration

- You run an agency where clients need to know not just how visible they are in AI search but whether their AI presence is factually sound

- You want to test your brand’s current AI accuracy before committing to any paid platform

- You need actual referral traffic data from AI platforms, not just mention counts

LLMClicks.ai is the wrong call if:

- You need basic mention monitoring at the lowest possible price point and accuracy validation is not a current priority

- Your business is not directly affected by pricing or feature misattribution in AI responses

- You need a platform with multi-year market presence and enterprise-level SLAs from day one

Otterly vs. Peec vs. LLMClicks.ai: Full Feature Comparison

Choosing the wrong AI visibility tool does not just waste budget. It creates a false sense of security. Your dashboard looks healthy while an accuracy problem quietly works through your pipeline.

The table below is the most complete side-by-side comparison of these three tools available. Every data point was verified through direct platform testing, not pulled from marketing pages.

Head-to-Head Feature Breakdown

| Feature | Otterly AI | Peec AI | LLMClicks.ai |
| --- | --- | --- | --- |
| Hallucination detection | No | No | Yes |
| Pricing accuracy monitoring | No | No | Yes |
| Feature misattribution detection | No | No | Yes |
| LLM platforms covered | ChatGPT, Perplexity | 3 base + paid add-ons | ChatGPT, Perplexity, Claude, Gemini |
| Claude tracking | No | Paid add-on | Yes |
| Gemini tracking | No | Paid add-on | Yes |
| Update frequency | Weekly | Daily | Real-time |
| Free permanent tier | No | No | Yes |
| Free trial | Limited | 7 days | Permanent free tools |
| Region-based tracking | Limited | Yes, 115+ languages | Yes |
| Agency reporting | Basic | Strong | Yes |
| AI referral traffic tracking | No | No | Yes |
| Query fan-out mapping | No | No | Yes |
| Prompt-level tracking | Basic | Yes | Yes |
| Competitor benchmarking | Yes | Yes | Yes |
| Optimization recommendations | No | No | Yes |
| Starting price | ~$49/mo | €89/mo (3 platforms) | Free tier available |
| Full platform coverage cost | ~$49/mo | €150+/mo | Included in paid plan |
| Best for | Early-stage monitoring | Global agency reporting | Accuracy validation and GEO |

What the Numbers Actually Mean

Three data points from this table deserve direct attention because they change how you evaluate the price difference between these tools.

Update frequency: Otterly’s weekly cycle means a hallucinated response can circulate in buyer research for up to seven days before you see it. For a SaaS company running 10 demos per week, that is a full week of prospects arriving with wrong information. Real-time monitoring is not a premium feature. For revenue-sensitive teams, it is a baseline requirement.

Full platform coverage cost: Peec’s €89 headline price covers three platforms. The moment you add Claude and Gemini, which represent a significant and growing share of AI search queries, the real cost jumps to €150 or higher. LLMClicks.ai includes all four platforms in a single plan. Over a 12-month period, the apparent price advantage of Peec disappears for any team needing complete coverage.

Free permanent tier: Neither Otterly nor Peec lets you verify your brand’s current AI accuracy before paying. LLMClicks.ai’s free AI Visibility Checker and AI Readiness Analyzer give you real data on your current situation in under two minutes. For teams building an internal business case, that is the difference between a speculative spend and a data-backed decision.

Accuracy Testing Results: What Each Tool Caught

This is where the comparison stops being theoretical.

I ran the same 30-prompt test set across all three platforms using LLMClicks.ai as the benchmark for detected accuracy issues. Here is what each tool returned:

| Test Metric | Otterly AI | Peec AI | LLMClicks.ai |
| --- | --- | --- | --- |
| Total brand mentions detected | 18 | 18 | 18 |
| Incorrect pricing flagged | 0 | 0 | 4 |
| Misattributed features flagged | 0 | 0 | 3 |
| Hallucinated integrations flagged | 0 | 0 | 1 |
| Outdated product information flagged | 0 | 0 | 2 |
| Overall accuracy issues surfaced | 0 | 0 | 7 |
| Dashboard sentiment | Positive | Positive | 7 issues requiring action |

Otterly and Peec returned identical results: 18 healthy mentions, positive sentiment, no alerts. LLMClicks.ai returned the same 18 mentions and surfaced 7 accuracy issues across 4 responses.

The 4 responses containing pricing errors had been running through our demo pipeline for months. Both competing tools classified them as positive brand signals every single week.

That is not a failure of those tools. It is a limit of the problem they were designed to solve. Monitoring and accuracy are different jobs. This test shows exactly where the line sits between them.

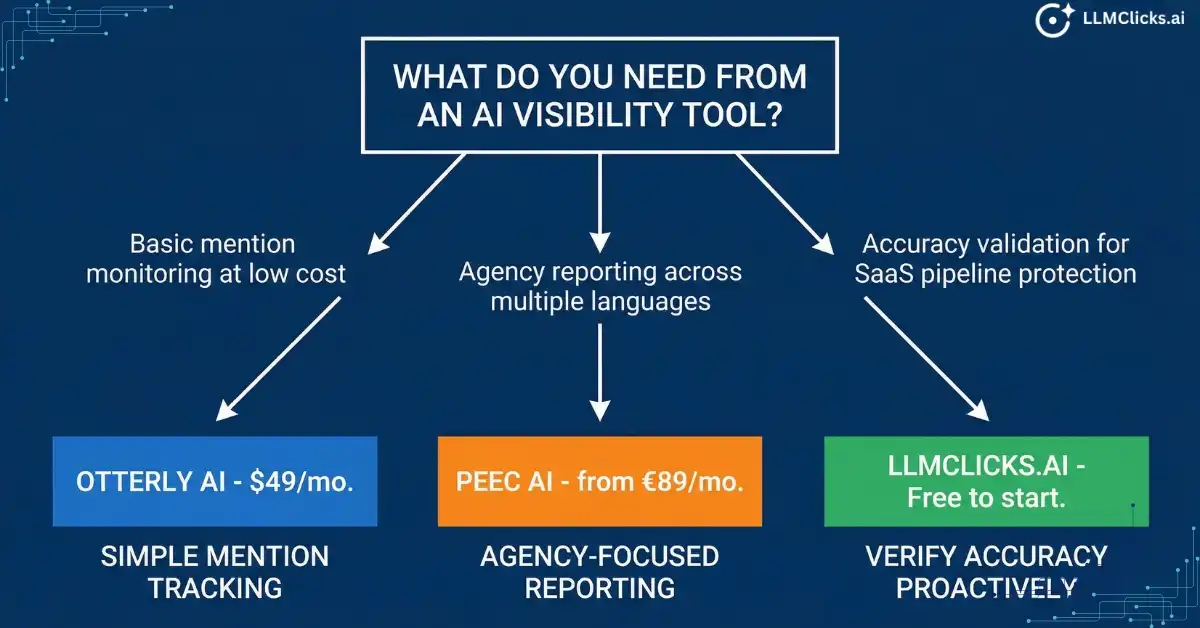

Which AI Visibility Tracker Should You Actually Buy?

Most tool comparisons end with a ranking. This one ends with a question: what is the specific problem you are trying to solve?

Otterly, Peec, and LLMClicks.ai are not competing for the same buyer. Each tool solves a real problem for a real use case. Choosing the wrong one does not just waste budget. It gives you a dashboard that looks healthy while the actual problem continues unchecked.

Here are four buyer scenarios based on the most common situations teams are in when they start evaluating this category.

Scenario 1: “I just need to know if my brand shows up in ChatGPT.”

The right tool: Otterly

If your goal is basic brand awareness monitoring and you are not yet at the stage where AI accuracy directly affects revenue, Otterly is the most efficient path to that data.

Fast setup. Clean interface. Affordable entry point. For a founder or small marketing team establishing a first AI visibility baseline, it removes the friction without over-engineering the solution.

Do not over-invest at this stage. Get the baseline data, validate that AI visibility is worth tracking for your specific business, then reassess in 90 days.

When to move on from Otterly: The moment a prospect references AI-generated information about your product on a demo call, you have outgrown it.

Scenario 2: “I run an agency and need polished AI visibility reports for clients.”

The right tool: Peec AI

Peec’s structured reporting format, multi-language support, and region-based prompt tracking are purpose-built for agency workflows. If your deliverable is a monthly AI visibility report that a client can review without interpretation, Peec produces that output faster than any other tool in this comparison.

Two things to do before signing up. First, calculate the real cost with full platform coverage including Claude and Gemini add-ons. Budget for €150 per month, not €89. Second, be transparent with clients that Peec tracks visibility scores, not accuracy. If a client asks whether their AI mentions are factually correct, Peec cannot answer that question.

When to move on from Peec: When clients start asking about AI accuracy, not just AI visibility. That question is coming. The category is maturing fast.

Scenario 3: “My SaaS brand is being mentioned in AI but I don’t know if the information is accurate.”

The right tool: LLMClicks.ai

This is the use case LLMClicks.ai was built for. If you have any signal that AI platforms may be misrepresenting your product (wrong pricing quoted on a call, a competitor’s feature attributed to you, a prospect referencing an integration you do not have), you already have an accuracy problem that monitoring tools will not surface.

Start with the free AI Visibility Checker before touching the paid platform. Run your brand through it right now. If it returns accuracy issues, you have a concrete data point to take to your leadership team. If it returns clean results, you have a baseline worth monitoring over time.

The paid platform makes sense the moment accuracy issues appear in your free audit results or in your sales conversations. At that point, the question is not whether LLMClicks.ai is worth the investment. It is how many deals the accuracy problem has already cost you.

Scenario 4: “I want to test AI visibility tracking before committing to any paid plan.”

The right tool: LLMClicks.ai free tier

Run the free AI Readiness Analyzer and AI Visibility Checker before evaluating any paid platform in this category. Both tools are permanent, require no credit card, and return real data on your brand’s current AI accuracy situation in under two minutes.

The free audit answers three questions that should inform every other purchase decision in this comparison:

- Is your brand currently appearing in AI responses?

- Is the information in those responses accurate?

- How well is your content structured for AI citation?

If the free audit returns clean results and basic monitoring is your only requirement, Otterly at $49 per month may be all you need. If the free audit surfaces accuracy issues, you have your business case for LLMClicks.ai already built.

Do not pay for a tool before you know which problem you are actually solving. The free audit removes that guesswork entirely.

The Decision at a Glance

| Your situation | Start here |
| --- | --- |
| Need basic mention monitoring at lowest cost | Otterly |
| Run an agency with international clients needing structured reports | Peec AI |
| SaaS brand needing accuracy validation, not just monitoring | LLMClicks.ai |
| Not sure yet, want to see real data before spending anything | LLMClicks.ai free tools |

The Real Cost of Monitoring a Problem You Are Not Actually Measuring

Otterly and Peec are not bad tools. They solve a real problem well. If you need to know whether your brand is appearing in AI responses, both platforms deliver that answer reliably and at a reasonable price point.

The problem is that most SaaS teams buying AI visibility tools think they are solving two problems at once: visibility and accuracy. They are only solving one.

I ran Otterly for months while ChatGPT was quoting wrong pricing to prospects. The dashboard was healthy the entire time. Eighteen mentions. Positive sentiment. No alerts. The tool was doing its job. The job just did not include catching the thing that was costing us deals.

That gap is not closing on its own. AI search is growing faster than the tools built to monitor it, and the distinction between “your brand appeared” and “your brand was represented accurately” is becoming the most important metric in your pipeline that nobody is currently measuring.

Here is where to go from here based on your specific situation:

- If you are not tracking AI visibility at all yet: Run the free AI Visibility Checker right now. It takes under two minutes and shows you your current brand presence and accuracy score across ChatGPT, Perplexity, Claude, and Gemini. No credit card. No demo call. Start there before evaluating any paid platform.

- If you are currently on Otterly and your pipeline is clean: Stay on Otterly. It is doing its job. Reassess the moment a prospect quotes inaccurate AI-generated information on a call.

- If you are on Peec managing international agency clients: Factor in the real cost of full platform coverage before your next renewal. And prepare for the client question that is coming: “Is what AI says about our brand actually accurate?”

- If you have already seen AI accuracy issues in your sales process: You do not need a longer evaluation. Run the free 120-point AI Brand Accuracy Audit and see exactly what ChatGPT, Perplexity, and Gemini are currently saying about your brand. The audit will either confirm the problem or rule it out. Either result is worth having.

The teams that win in AI search over the next 12 months will not just be the ones with the highest visibility scores. They will be the ones who caught the accuracy problems early, fixed them fast, and turned AI search into a pipeline asset instead of a liability.

The monitoring tools show you the scoreboard. LLMClicks.ai shows you whether the score is real.

Frequently Asked Questions About AI Visibility Tracking Tools

These are the questions teams ask most often before committing to an AI visibility platform. The answers are based on direct platform testing, not marketing copy.

Q1. What is the best free AI visibility tool in 2026?

Ans: LLMClicks.ai offers the only permanent free AI visibility tools in this category. The AI Visibility Checker and AI Readiness Analyzer are both free with no credit card required and no trial expiry date.

The AI Visibility Checker shows your current brand presence across ChatGPT, Perplexity, Claude, and Gemini. The AI Readiness Analyzer scores how well your content is structured for AI citation. Together they give you a baseline on how accurately AI platforms currently describe your brand, in under two minutes.

Neither Otterly nor Peec offers an equivalent permanent free tier. Both require payment before you can verify whether your brand has an accuracy problem worth monitoring.

Q2. What are the best Otterly AI alternatives in 2026?

Ans: The most commonly evaluated alternatives to Otterly are LLMClicks.ai, Peec AI, Rankshift, and ZipTie. Each solves a different version of the AI visibility problem.

LLMClicks.ai is the only alternative that adds hallucination detection and accuracy validation on top of standard mention monitoring. If you are leaving Otterly because it shows healthy dashboards without catching accuracy issues, LLMClicks.ai is the most direct upgrade path.

Rankshift and ZipTie both offer stronger optimization guidance than Otterly. Peec offers stronger multi-language reporting. The right alternative depends on which specific Otterly limitation is driving the search.

Q3. What are the best Peec AI alternatives with region-based prompts and reporting?

Ans: LLMClicks.ai supports region-based prompt tracking with full multi-language coverage. For teams that need structured client-facing reports across international markets, it delivers Peec’s core reporting capability alongside the accuracy validation layer Peec does not have.

For pure multi-language monitoring at scale, Peec remains the strongest option in the category. Its 115-language coverage is genuinely best-in-class. The trade-off is the add-on pricing model and the absence of any accuracy checking.

If your agency clients are starting to ask whether AI responses about their brand are accurate, not just frequent, LLMClicks.ai answers that question and Peec does not.

Q4. Does Otterly AI detect hallucinations or inaccurate AI mentions?

Ans: No. Otterly tracks whether your brand name appears in AI responses. It does not evaluate whether the content of those responses is accurate.

A response containing incorrect pricing, a misattributed competitor feature, or a hallucinated integration logs in Otterly as a positive brand mention. The dashboard shows healthy visibility while the inaccurate information continues circulating in buyer research.

This is not a bug. It reflects the problem Otterly was designed to solve: mention monitoring, not accuracy validation. If accuracy matters to your business, Otterly is the wrong tool for that specific job.
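The difference between the two checks can be sketched in a few lines of Python. This is a toy illustration only, not how Otterly or LLMClicks.ai is implemented: the brand name `ExampleCo`, the `KNOWN_FACTS` dictionary, and all function names are hypothetical, and the $49 versus $79 figures simply reuse the pricing example from the start of this post.

```python
import re

# Hypothetical ground truth for one brand (illustrative values only).
KNOWN_FACTS = {"brand": "ExampleCo", "pro_price_usd": 49}


def is_mentioned(response: str, brand: str) -> bool:
    """Mention monitoring: does the brand name appear at all?"""
    return brand.lower() in response.lower()


def quoted_prices(response: str) -> list[int]:
    """Extract whole-dollar amounts such as '$79' from the response text."""
    return [int(amount) for amount in re.findall(r"\$(\d+)", response)]


def price_is_accurate(response: str, expected_price: int) -> bool:
    """Accuracy validation: every quoted price must match the known price.

    A response that quotes no price at all passes this check.
    """
    return all(price == expected_price for price in quoted_prices(response))


ai_response = "ExampleCo's Pro plan costs $79 per month."

print(is_mentioned(ai_response, KNOWN_FACTS["brand"]))               # True
print(price_is_accurate(ai_response, KNOWN_FACTS["pro_price_usd"]))  # False
```

The same response passes the mention check and fails the accuracy check, which is the situation a mention-only dashboard cannot see. A real accuracy layer would need to handle far more than one regex over prices; the sketch only shows why the two checks are different questions.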

Q5. Is there a Peec AI alternative built for enterprise SaaS teams?

Ans: Yes. LLMClicks.ai and Scrunch both serve enterprise SaaS use cases. The distinction is in what each platform prioritises.

Scrunch focuses on brand safety and AI presence monitoring at scale, with GA4 integration and broad engine coverage. It starts at $250 per month and is built for mid-market to enterprise teams with a brand protection mandate.

LLMClicks.ai focuses specifically on the accuracy problem: detecting when AI platforms misrepresent your pricing, features, or integrations. For enterprise SaaS teams where incorrect AI citations directly affect pipeline, that specificity makes it the more targeted choice.

Q6. How do I justify an AI visibility tool investment to my leadership team?

Ans: The fastest path to leadership buy-in is a concrete data point, not a category argument.

Run the free LLMClicks.ai AI Visibility Checker before your next budget conversation. If it surfaces accuracy issues, you have your business case already built: AI platforms are actively misrepresenting your product to buyers, and you have evidence of exactly what they are saying.

If your leadership team needs a revenue frame, work backwards from your demo conversion rate. If you run 20 demos per month at a 25% close rate, you close about five deals per month. If one accuracy issue is degrading that conversion by even 10% in relative terms, that is roughly one deal every two months lost to information you did not know was wrong. At your average contract value, that number makes the platform investment straightforward to justify.
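To put your own numbers into that revenue frame, here is a minimal Python sketch. It assumes the 10% degradation is relative to the close rate (25% falling to 22.5%), which works out to about half a deal per month. If you read it as an absolute drop of 10 percentage points instead, the loss is two deals per month. The function name and the `avg_contract_value` figure are hypothetical placeholders, not data from the testing in this post.

```python
def deals_lost_per_month(demos: int, close_rate: float, relative_drop: float) -> float:
    """Deals lost each month when the close rate falls by a relative fraction."""
    baseline_deals = demos * close_rate
    return baseline_deals * relative_drop


demos_per_month = 20
close_rate = 0.25            # 25% of demos close
relative_drop = 0.10         # close rate degraded by 10% (relative)
avg_contract_value = 12_000  # hypothetical placeholder: use your own figure

lost_deals = deals_lost_per_month(demos_per_month, close_rate, relative_drop)
annual_revenue_at_risk = lost_deals * 12 * avg_contract_value

print(f"Deals lost per month: {lost_deals:.2f}")
print(f"Annual revenue at risk: ${annual_revenue_at_risk:,.0f}")
```

Swap in your real demo volume, close rate, and contract value before taking the result to a budget conversation. The point is the order of magnitude, not the exact figure.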

The teams that struggle to get budget approval are the ones asking for money to monitor a problem they have not yet proven exists. The free audit removes that obstacle.

Q7. Can I track my brand visibility in Perplexity specifically?

Ans: Yes. All three tools in this comparison track Perplexity alongside ChatGPT as standard coverage.

LLMClicks.ai goes further: it tracks not just whether your brand appears in Perplexity responses but whether the information in those responses is accurate. Given that Perplexity cites sources directly and buyers treat those citations as verified information, accuracy monitoring on Perplexity is particularly high-stakes for SaaS brands.

The free AI Visibility Checker includes Perplexity in its scan. Run it now to see your current Perplexity brand presence and accuracy score before evaluating any paid platform.