Traditional keyword research is failing SaaS growth teams. We are no longer operating in an era of blind intuition and estimated search volumes. Search has evolved into a landscape of precise measurement and highly complex queries.

There is a fundamental difference between typing “agency project management tools” into a search engine and asking an AI platform a constrained question. Today, a buyer opens ChatGPT and prompts: “Which project management tool is best for a creative agency that needs client portals and integrated time tracking: Asana, Monday, or ClickUp?”

That is the difference between keyword research and prompt research. Google retrieves a list of links. Large Language Models synthesize a definitive answer.

Most SaaS marketers are still mapping their content to outdated, short-tail keyword intents. They are completely missing the crucial “synthesis” phase of the modern buyer journey. If your content is not engineered to answer these specific conversational prompts, the AI will simply recommend your competitor.

To win market share in 2026, you need a new playbook. You need a unified framework that bridges the gap between traditional SEO and Generative Engine Optimization (GEO).

This guide will show you exactly how to map legacy user intent to modern LLM prompts. You will learn how to engineer citation triggers, track your AI visibility metrics, and measure your exact Share of Voice against hard B2B SaaS industry benchmarks.

Why Keyword Research is Failing in the Era of LLMs

For the past decade, SEO teams have relied on keyword volume. We exported CSV files from legacy tools, found terms with high search volume and low difficulty, and built landing pages to match.

That model is permanently broken. Relying solely on traditional keyword research in 2026 will leave your brand invisible to high-intent buyers. Here is why the old playbook fails.

Data Availability vs. Historical Context

Keyword volume is a lagging indicator. It reports averages of what users searched in past months, not what they are asking today.

In contrast, LLM prompts are conversational, highly volatile, and entirely context-driven. A B2B buyer does not type a static, two-word keyword into Claude or Gemini. They write a paragraph explaining their exact business problem.

Because prompt variations are infinite, traditional search volume metrics are useless for AI visibility. You cannot rely on historical search data to predict conversational outputs. Instead of optimizing for a single high-volume keyword, you must optimize for a cluster of conversational constraints.

Intent Recognition vs. Recommendation Triggers

Google ranks pages based on broad intent categories like Informational or Transactional. If a user searches “agency project management tools”, Google returns a page of listicles and homepage links.

Large Language Models do not return a list of links. They synthesize answers based on specific recommendation triggers.

When a user prompts an AI, they inject constraints into the query. They do not just ask for a tool. They ask: “Which project management tool integrates natively with Slack, offers client portal access, and costs under $20 per user for a 50-person creative agency?”

Google matches intent. AI matches constraints.

If your content only targets the broad keyword intent, the AI will ignore you. To win the citation and become the recommended solution, your content must explicitly feed the AI the exact constraint data it needs to synthesize the answer.

The 4-Step Prompt Mapping Framework for SaaS

To capture high-intent buyers in 2026, you must reverse-engineer how LLMs evaluate your product. You need to bridge the gap between what users actually want and the data AI needs to synthesize an answer.

Here is the exact four-step framework to map your content to AI prompts.

Step 1: Identify Audiences Through Constraint-Based Personas

Traditional SEO relies on broad demographics. AI SEO requires constraint-based personas.

When a user asks ChatGPT or relies on Perplexity for a recommendation, they feed the AI their exact operational bottlenecks. Let us look at the project management software vertical.

- Traditional Persona: Marketing agencies.

- Constraint-Based Persona: A 50-person creative agency that requires white-labeled client portals, native Slack integration, and integrated time tracking.

Stop targeting broad industries. Start mapping your content to the specific, technical constraints your product solves.
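A constraint-based persona can be treated as a small data structure that renders directly into a test prompt. The sketch below is one possible shape, not a prescribed format: the `ConstraintPersona` class and its field names are assumptions made for illustration, and the example values come from the persona above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ConstraintPersona:
    """A buyer described by operational constraints, not demographics (hypothetical shape)."""
    segment: str                                   # e.g. "50-person creative agency"
    category: str                                  # product category being evaluated
    constraints: List[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the persona as the kind of question a buyer might ask an AI."""
        if not self.constraints:
            needs = ""
        elif len(self.constraints) == 1:
            needs = f" that needs {self.constraints[0]}"
        else:
            head = ", ".join(self.constraints[:-1])
            needs = f" that needs {head}, and {self.constraints[-1]}"
        return f"Which {self.category} is best for a {self.segment}{needs}?"


persona = ConstraintPersona(
    segment="50-person creative agency",
    category="project management tool",
    constraints=[
        "white-labeled client portals",
        "native Slack integration",
        "integrated time tracking",
    ],
)
print(persona.to_prompt())
```

Keeping constraints as a list makes it easy to add or drop one requirement at a time and see which constraint changes the AI's recommendation.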

Step 2: Map Solutions to Decision-Stage Evaluation Criteria

Once you know the constraints, you must map them to the decision stage. Buyers use AI to do the heavy lifting of product comparison.

Transform your legacy keywords into the exact evaluation criteria users feed into their prompts. Take your bottom-of-funnel keyword “agency project management tools” and break it down into conversational comparison prompts:

- “Which project management tool has better client portals: Asana or Monday?”

- “Compare ClickUp and Asana for integrated time tracking and agency billing.”

- “What is the best Monday alternative for a creative team that needs strict SLA management?”

Your landing pages and blog posts must answer these specific, feature-level comparisons directly.
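The breakdown from one head keyword into comparison prompts can be generated mechanically. Here is a minimal sketch, assuming you already have a list of competing tools and a list of evaluation criteria. The function name `comparison_prompts` and the prompt template are illustrative choices, not a standard.

```python
from itertools import combinations
from typing import List


def comparison_prompts(category: str, tools: List[str], criteria: List[str]) -> List[str]:
    """Expand a category into pairwise, feature-level comparison prompts."""
    prompts = []
    for criterion in criteria:
        # combinations() yields each unordered pair of tools exactly once.
        for first, second in combinations(tools, 2):
            prompts.append(
                f"Which {category} has better {criterion}: {first} or {second}?"
            )
    return prompts


tools = ["Asana", "Monday", "ClickUp"]
criteria = ["client portals", "integrated time tracking"]
prompts = comparison_prompts("project management tool", tools, criteria)

for prompt in prompts:
    print(prompt)
```

Three tools and two criteria produce six prompts. The list grows quickly, so prune it to the comparisons your buyers actually make.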

Step 3: Account for Query Fan-Out in Prompt Design

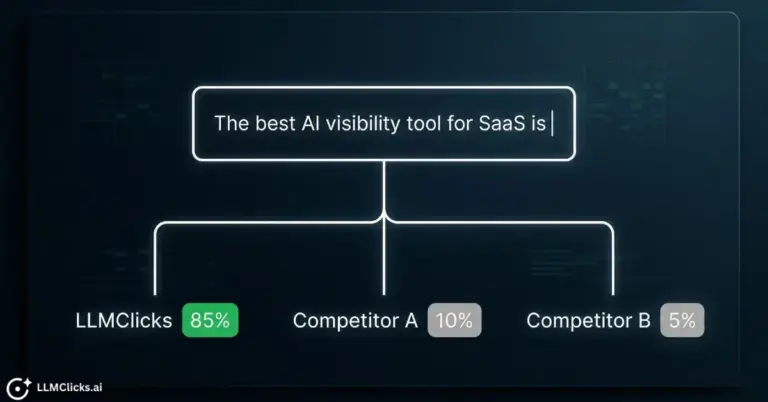

AI search platforms built on Large Language Models do not just process one question at a time. They use a mechanism called “query fan-out.”

When a buyer enters a complex prompt comparing Asana, Monday, and ClickUp, the AI breaks that single prompt into multiple hidden sub-queries. It draws on its training data and searches the live web for “Asana client portal features”, then “Monday time tracking pricing”, and finally “ClickUp SLA management reviews”.

If your website only targets the head term, you will miss the fan-out queries. You must build cluster content that answers every possible sub-query the AI generates during its synthesis process.
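You cannot see the real sub-queries an AI generates, but you can approximate them and check your own coverage. The sketch below is a rough gap check under stated assumptions: the sub-query list is a guess modeled on the fan-out example above, “ExamplePM” and the page paths are hypothetical, and matching on shared words is a crude stand-in for real relevance.

```python
import re
from typing import Dict, List, Set


def tokens(text: str) -> Set[str]:
    """Lowercase word tokens, so 'Pricing:' matches 'pricing'."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def coverage_gaps(sub_queries: List[str], pages: Dict[str, str]) -> List[str]:
    """Return the sub-queries that no single page covers term-for-term."""
    page_tokens = [tokens(body) for body in pages.values()]
    return [
        query for query in sub_queries
        if not any(tokens(query) <= page for page in page_tokens)
    ]


# Guessed sub-queries for a hypothetical product called "ExamplePM".
sub_queries = [
    "ExamplePM client portal features",
    "ExamplePM time tracking pricing",
    "ExamplePM SLA management reviews",
]

# Hypothetical page paths mapped to their visible text.
pages = {
    "/features/client-portal": "ExamplePM client portal features for agencies",
    "/pricing": "ExamplePM pricing: time tracking is included in every plan",
}

print(coverage_gaps(sub_queries, pages))
```

In this example the SLA reviews sub-query has no matching page, which marks it as a candidate for new cluster content.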

Step 4: Engineer Your Citation Triggers

Getting the AI to understand your product is not enough. You must make your brand the easiest source to cite as the definitive solution. You do this by engineering citation triggers.

AI models look for structured, verifiable data. Use these specific tactics to become the primary citation:

- Data Tables: Compare your features against competitors using clean HTML tables. Facts in a table are easier to extract reliably than the same facts buried in paragraph text.

- Structured Schema: Implement robust Product and FAQ schema markup. Feed the exact pricing, integration, and feature constraints directly to AI crawlers like GPTBot.

- Third-Party Validation: Embed raw data from G2, Capterra, or Reddit directly onto your pages. AI trusts consensus. When you provide the external proof right next to your feature claims, the AI uses your page as the ultimate source of truth.
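To make the structured schema tactic concrete, here is a minimal sketch that builds schema.org Product markup as JSON-LD. The product name, price, and feature values are hypothetical placeholders. The property names (`offers`, `price`, `priceCurrency`, `additionalProperty`) are standard schema.org vocabulary, but validate the output with a structured data testing tool before you ship it.

```python
import json
from typing import Dict


def product_jsonld(name: str, description: str, price: float,
                   currency: str, features: Dict[str, str]) -> dict:
    """Build a schema.org Product object that exposes pricing and feature constraints."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        # Each feature constraint becomes a name/value pair crawlers can read.
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in features.items()
        ],
    }


# All values below are hypothetical examples.
markup = product_jsonld(
    name="ExamplePM",
    description="Project management software for creative agencies.",
    price=18,
    currency="USD",
    features={
        "Client portal": "White-labeled",
        "Slack integration": "Native",
        "Time tracking": "Integrated",
    },
)

script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Generate the markup from the same data source that feeds your pricing page, so the two cannot drift apart.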

AI Visibility Metrics Benchmark: What is a “Good” Score?

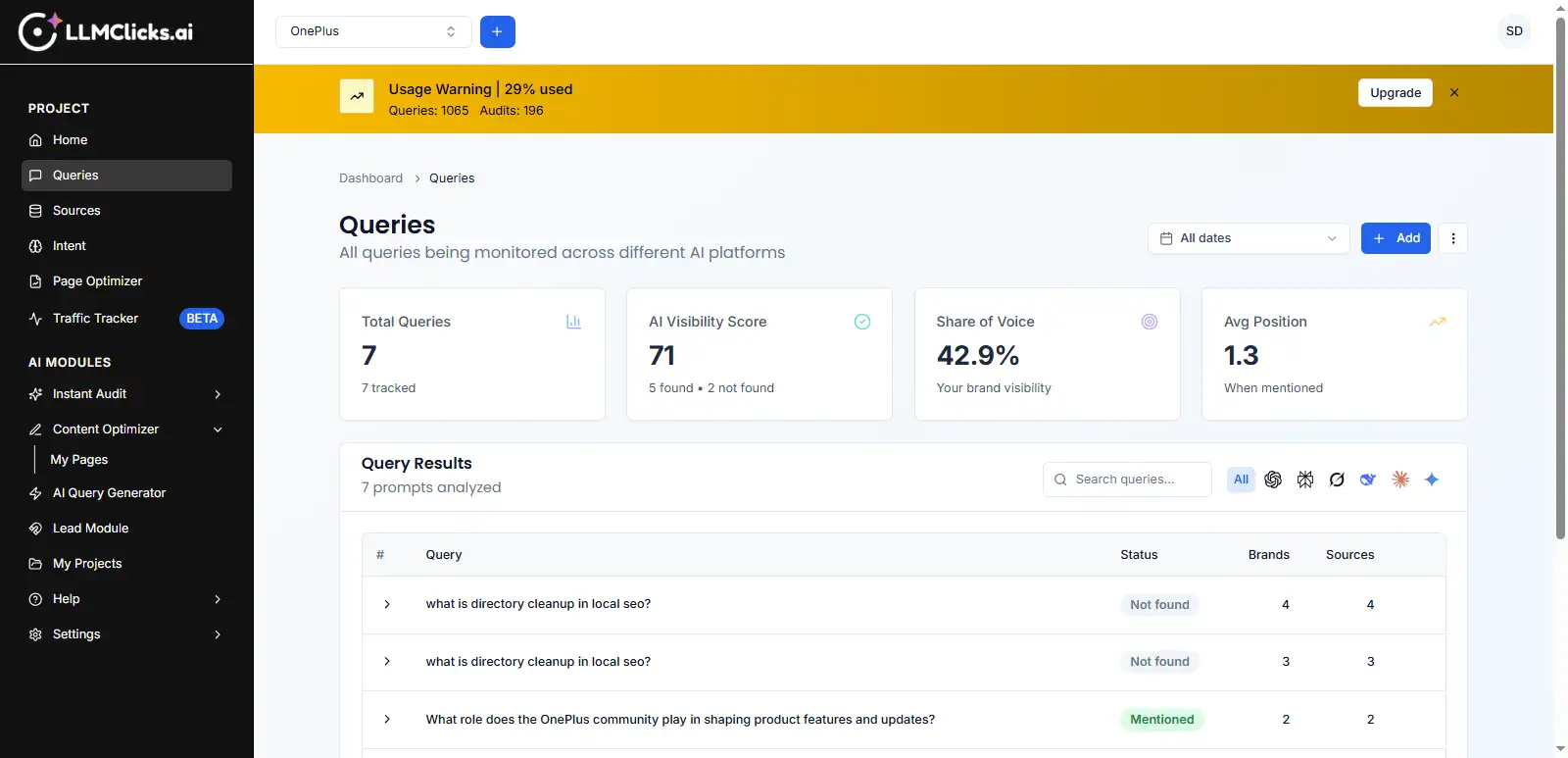

Once you map your intent to specific LLM prompts, you need to measure your success.

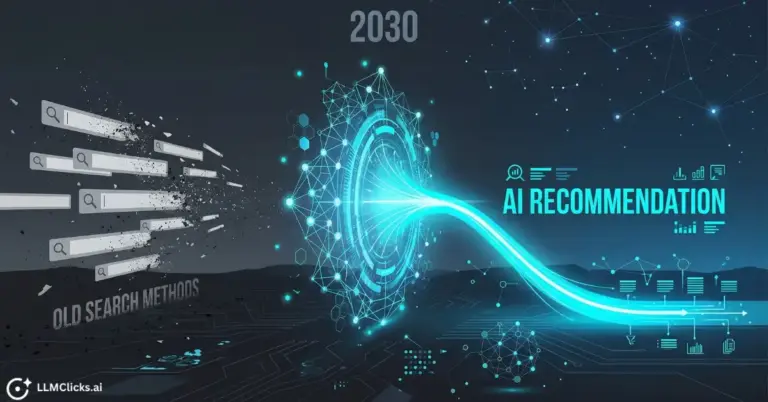

Traditional SEO relies on keyword rankings and organic traffic. AI SEO relies on Share of Voice (SOV). AI Share of Voice measures how often your brand is recommended across all major Large Language Models compared to your direct competitors. It is the definitive metric for brand visibility in 2026. For a complete breakdown of the math, read our guide on how to measure AI visibility for your brand.
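As a rough illustration, Share of Voice can be computed from a batch of collected AI answers. The sketch below assumes one simple definition: a brand's mentions divided by the total mentions of all tracked brands, counting each brand at most once per answer. This definition and the sample answers are assumptions for illustration, and the exact formula in your tracking tool may differ.

```python
from collections import Counter
from typing import Dict, List


def share_of_voice(responses: List[str], brands: List[str]) -> Dict[str, float]:
    """Return each brand's share of all brand mentions across the responses."""
    mentions = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Count a brand once per response, however often it is repeated.
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}


# Made-up answers standing in for real AI responses.
responses = [
    "For agencies, Asana and ClickUp both offer client portals.",
    "Monday is a solid choice for time tracking.",
    "ClickUp has the most flexible pricing.",
    "Asana is widely used by creative teams.",
]

sov = share_of_voice(responses, ["Asana", "Monday", "ClickUp"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

Plain substring matching is the weak point here. Brand names that are also common words, such as Monday, need stricter matching in production.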

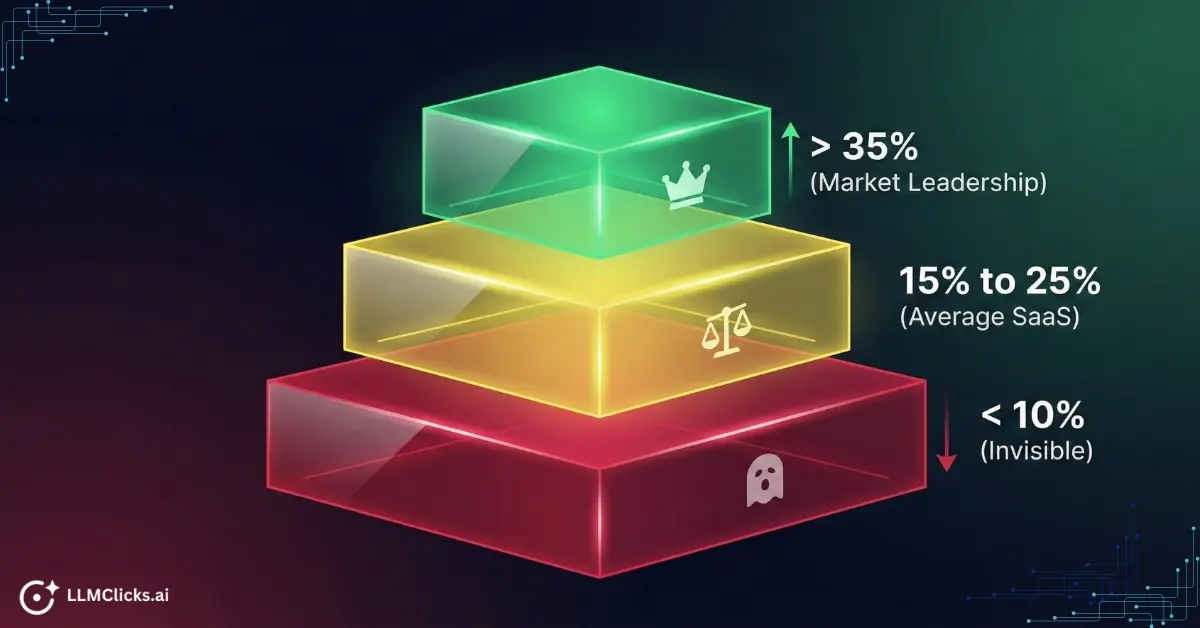

But what actually constitutes a good score? Based on current data for the B2B SaaS sector, here are the hard benchmarks you need to evaluate your performance.

Poor: Under 10%

At this level, your brand is effectively invisible in the broader market conversation. You are likely being drowned out by dominant players. Your Share of Mind is negligible.

If you run your target prompts through an AI visibility tracker tool and your SOV is under 10%, you likely have more than a marketing problem. You have a technical SEO issue. Either LLM crawlers are blocked from reading your site, or your content lacks the necessary citation triggers.

Average: 15% to 25%

This is the standard range for established and healthy B2B SaaS companies. Scoring in this tier indicates that you are a known entity.

You are consistently appearing in AI search results, social mentions, and media coverage alongside your primary competitors. This is the baseline you must reach to ensure your sales team is not fighting an uphill battle during vendor evaluations.

Excellent: Over 35%

Reaching this threshold signals absolute Market Leadership.

In B2B SaaS, achieving an SOV significantly higher than your actual market share is a leading indicator of future revenue growth. This concept is known as Excess Share of Voice (ESOV). Brands in this tier do not just participate in the AI narrative. They dictate it.
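The tiers above translate into a simple lookup. The sketch below encodes them exactly as stated, which means scores between 10% and 15% and between 25% and 35% fall outside every named tier. It also assumes ESOV is the plain difference between Share of Voice and market share, which is the common reading of the term. The function names are illustrative.

```python
def classify_sov(sov_pct: float) -> str:
    """Map a Share of Voice percentage to the benchmark tiers listed above."""
    if sov_pct < 10:
        return "Poor"
    if 15 <= sov_pct <= 25:
        return "Average"
    if sov_pct > 35:
        return "Excellent"
    # The stated benchmarks leave 10-15% and 25-35% undefined.
    return "Between tiers"


def excess_share_of_voice(sov_pct: float, market_share_pct: float) -> float:
    """ESOV, assumed here to be Share of Voice minus market share, in points."""
    return sov_pct - market_share_pct


# Hypothetical brand: 40% Share of Voice on a 25% market share.
print(classify_sov(40))
print(excess_share_of_voice(40, 25))
```

A positive ESOV means your visibility is running ahead of your current market position.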

The Implementation Timeline and Reporting Cadence

You cannot fix your AI visibility overnight. Building a sustainable Share of Voice requires a methodical, phased rollout. Here is the exact timeline and reporting cadence your growth team should follow to execute this framework.

Month 1: Capture Baseline Data

Before you optimize a single page, you must understand your current reality. Your first 30 days are dedicated purely to infrastructure and data collection.

- Determine Optimal Prompt Set Size: Start with 50 to 100 high-intent conversational prompts based on your constraint personas.

- Establish Tracking Infrastructure: Input your prompt clusters into your AI tracking platform.

- Record the Baseline: Document your initial Share of Voice across ChatGPT, Claude, and Perplexity. Identify exactly which competitors currently own your target citations.
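Once the baseline is recorded, finding out who owns each citation is a counting exercise. The sketch below assumes you log one record per platform and prompt, with the brands recommended in that answer. The record layout and the sample data are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional, Tuple

# Each record: (platform, prompt, brands recommended in the answer).
Observation = Tuple[str, str, List[str]]


def citation_owners(observations: List[Observation]) -> Dict[str, Optional[str]]:
    """For each prompt, return the brand recommended most often across platforms."""
    per_prompt = defaultdict(Counter)
    for _platform, prompt, brands in observations:
        per_prompt[prompt].update(brands)
    return {
        prompt: (counts.most_common(1)[0][0] if counts else None)
        for prompt, counts in per_prompt.items()
    }


# Made-up baseline data for two prompts.
observations = [
    ("ChatGPT", "best PM tool with client portals", ["Asana", "ClickUp"]),
    ("Claude", "best PM tool with client portals", ["Asana"]),
    ("Perplexity", "best PM tool with client portals", ["Asana", "Monday"]),
    ("ChatGPT", "PM tool with time tracking under $20", ["ClickUp"]),
    ("Claude", "PM tool with time tracking under $20", ["ClickUp", "Monday"]),
]

for prompt, owner in citation_owners(observations).items():
    print(f"{prompt} -> {owner}")
```

Prompts where no brand is recommended return `None`, which flags them as open territory rather than a competitor stronghold.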

Month 2 and 3: Content Transformation

Once you have the data, you begin the optimization sprints.

- Address Query Fan-Out Gaps: Look at the prompts where your brand is completely absent. Create targeted cluster content to answer the specific sub-queries the AI is generating during its synthesis phase.

- Optimize Structured Data: Implement strict Product and FAQ schema across your money pages. Ensure your technical SEO foundation allows AI bots to crawl your site without rendering issues.

- Deploy Citation Triggers: Add comparison tables and embed third-party review data directly into your content. This positions your page as the primary source of truth for the AI.
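Before you invest in content, confirm that AI crawlers are allowed to reach it. The sketch below uses Python's standard `urllib.robotparser` to test a robots.txt file against the GPTBot user agent. The robots.txt content and the URL are made-up examples. This checks crawl permissions only and says nothing about rendering problems.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt lets this user agent fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Example robots.txt that blocks GPTBot but allows every other crawler.
robots_txt = "\n".join([
    "User-agent: GPTBot",
    "Disallow: /",
    "",
    "User-agent: *",
    "Allow: /",
])

url = "https://example.com/pricing"
print("GPTBot allowed:", crawler_allowed(robots_txt, "GPTBot", url))
print("Googlebot allowed:", crawler_allowed(robots_txt, "Googlebot", url))
```

A blanket disallow like this one is a common reason a brand never appears in AI answers, so run the check against your live robots.txt for every crawler you care about.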

The AI SEO Reporting Cadence

To keep your executive team aligned, you must establish a strict reporting structure. Stop reporting on basic traffic. Start reporting on AI visibility metrics.

- Weekly: Prompt Volatility. Monitor how often AI answers change. Look for newly hallucinated pricing or suddenly missing features. This allows your technical team to push immediate fixes.

- Monthly: Share of Voice Trends. Report your aggregate SOV percentage against your core competitors. Show the direct correlation between your content updates and your rising citation rate.

- Quarterly: Pipeline Attribution. Tie your AI citations back to revenue. Analyze your referral traffic from AI platforms and measure the increase in your branded search volume.
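The weekly volatility check can start as a text comparison between last week's answer and this week's. The sketch below uses Python's standard `difflib` to score how much an answer changed and flags required facts that disappeared. The scoring approach, the fact list, and the sample answers are assumptions for illustration, and “ExamplePM” is a hypothetical product.

```python
from difflib import SequenceMatcher
from typing import List


def volatility(previous: str, current: str) -> float:
    """Score answer drift: 0.0 means identical text, 1.0 means nothing in common."""
    return 1.0 - SequenceMatcher(None, previous, current).ratio()


def missing_facts(answer: str, required_facts: List[str]) -> List[str]:
    """Return the required facts that the answer no longer mentions."""
    lowered = answer.lower()
    return [fact for fact in required_facts if fact.lower() not in lowered]


# Made-up answers for the same prompt, one week apart.
last_week = "ExamplePM offers a client portal and time tracking for agencies."
this_week = "ExamplePM offers time tracking for agencies."

print(f"Volatility: {volatility(last_week, this_week):.2f}")
print("Missing:", missing_facts(this_week, ["client portal", "time tracking"]))
```

A missing fact is a stronger alert than a high volatility score, because wording changes all the time while a dropped feature changes the recommendation.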

Measuring Business Impact Beyond Visibility

Visibility is a vanity metric if it does not translate into revenue.

When a prospect uses ChatGPT to compare your software against a competitor, they are at the absolute bottom of the funnel. If the AI hallucinates your pricing or core features, that prospect is gone. You will never even know they were looking.

Tracking this manually is impractical. You cannot have your marketing team spend three hours a day typing variations of your target keywords into ChatGPT to see if you appear. Manual testing is unscalable, prone to personalization bias, and completely blind to the query fan-out process.

You need enterprise-grade infrastructure.

LLMClicks.ai is the required operating system for systematic prompt tracking and performance measurement. It automates your entire query mapping framework. You input your target conversational prompts. The platform tracks your Share of Voice across every major Large Language Model automatically.

More importantly, it detects the exact hallucinations that kill your conversions. If ChatGPT suddenly claims your SaaS product lacks a client portal, LLMClicks.ai alerts your growth team instantly. You get the exact insight you need to fix the citation trigger and win back your pipeline.

Conclusion: Control the AI Narrative

Search has fundamentally changed. Buyers no longer want to click through ten different SaaS landing pages to figure out which tool has the best client portal or SLA management. They want the AI to do the synthesis for them.

If you continue to optimize your content exclusively for traditional keyword intent, you are willingly handing your market share to your competitors.

You must map your content to the exact conversational prompts your buyers are using today. You must engineer the technical citation triggers the AI needs to validate your product. Most importantly, you must measure your success using hard Share of Voice benchmarks instead of outdated traffic metrics.

If you do not map your intent to AI prompts, your competitors will dictate your product narrative.

Stop optimizing for clicks. Start optimizing for citations.

Ready to see exactly what ChatGPT and Perplexity are saying about your software? Start a free baseline AI accuracy audit with LLMClicks.ai today and take control of your pipeline.

Frequently Asked Questions About Prompt Mapping

Q1. What is the exact difference between prompt research and keyword research?

Ans: Keyword research identifies the broad, historical search terms users type into Google to retrieve a list of links. Prompt research identifies the hyper-specific, conversational constraints users feed into an AI to generate a synthesized recommendation. Keyword research optimizes for retrieval. Prompt research optimizes for synthesis.

Q2. How many prompts should I track for effective AI visibility measurement?

Ans: Do not track thousands of generic prompts. Start with 50 to 100 highly constrained, bottom-of-funnel prompts. Focus entirely on the queries where buyers are actively comparing your product to your direct competitors. Tracking 10,000 top-of-funnel informational prompts will dilute your Share of Voice metrics and waste your reporting bandwidth.

Q3. How long does it take to see results from prompt research optimization?

Ans: It depends on your technical infrastructure. If you deploy structured data fixes like Product schema and comparison tables, AI crawlers like GPTBot can parse those citation triggers within 14 to 30 days. However, shifting your aggregate Share of Voice against an entrenched competitor requires consistent cluster content creation. Expect to see measurable pipeline movement in 60 to 90 days.